You’ve been at it since 8. You chugged your morning coffee, you set up the perfect eval harness, and you wrote the most beautiful system prompt. You tested and refined almost every word. It’s free of contradictions. It uses words like essential. Your eval scores are a shiny letter A. At 4 PM, you deploy. An hour later, Sally on the consumer team flames you: “NOW THE AGENT IGNORES HALF MY INSTRUCTIONS!?”

WTF.

When asked about “prompting,” many AI engineers think about writing a good system prompt. But that's like thinking of software architecture as writing a good main function. Conversely, “prompting” to a layperson means, “the message I sent the chatbot.” When done well, prompting is actually a multi-layered infrastructure where each component plays a specific role in shaping agent behavior.

In this post, I'll break down the four layers of prompting infrastructure we use to build reliable, capable agents at Augment and show you how to use each one effectively.

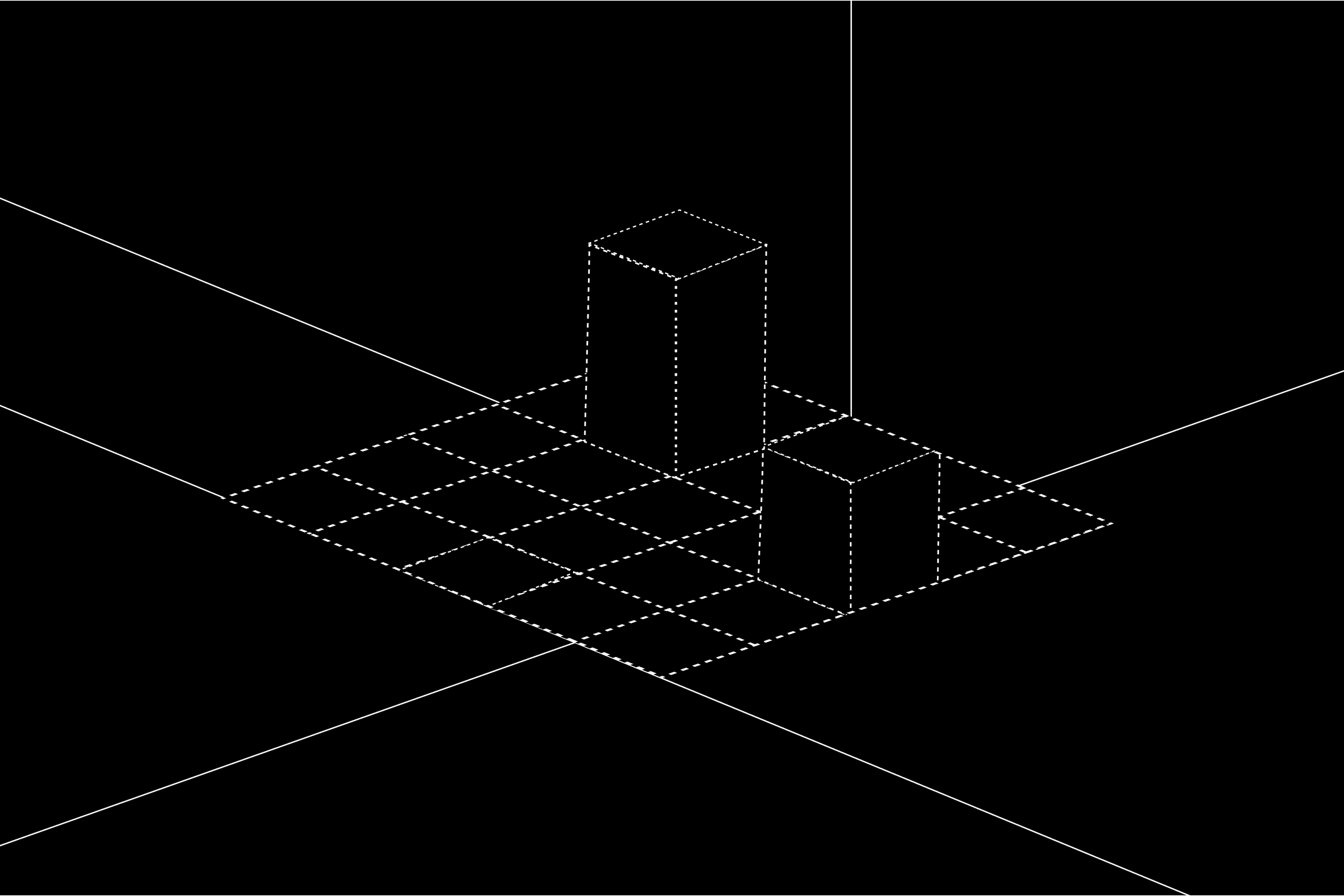

The four layers of prompting infrastructure

Think of prompting like a well-designed codebase: you wouldn’t put all your logic in one file, and you shouldn't put all your agent guidance in one prompt either.

We’ve found that there are four main layers when it comes to prompting. Each has its own specific job, and they work together, building on each other, to deliver the best results. They are:

- System prompts: The agent's identity and core behavior

- Tools: What the agent uses and when/how

- Skills and other .md files: Project- or service-specific knowledge and reusable patterns

- User message: The actual request and runtime context

When instructions conflict, the more specific layer generally wins. A user message saying "ignore code style for this prototype" will often override your guidelines about following strict formatting rules. That said, this depends on how strongly you've anchored your system prompt. Critical guardrails written with enough emphasis can and should resist override from other layers. Intentionality is key.

Let's dive into each layer and explore how they work together. The examples in this post are based on real patterns from Augment's production codebase, with some simplified for clarity.

System prompts: your agent's identity

The system prompt is your foundation. It defines who the agent is, its core behavioral patterns, and what matters most. It’s less a comprehensive instruction manual and more a highlight reel: it tells the agent what to pay attention to across all the other layers.

For example, detailed tool usage instructions generally go in the tools layer (more on that below). But if a tool is central to how an agent works, it’s worth highlighting in the system prompt. For instance, at Augment, we prompt the agent to favor our context engine tool because it's core to how the agent explores code. But we don’t include prompting around, say, specific data platforms or other integrations.

We’ve also found that critical instructions should go at the beginning and get reinforced at the end. This takes advantage of primacy and recency effects. LLMs pay more attention to what comes first and what comes last.
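As a minimal sketch of that placement, assuming a hypothetical `build_system_prompt` helper and made-up section labels (none of this is Augment's actual prompt code), you could assemble the prompt so the critical rules open it and get restated at the end:

```python
def build_system_prompt(identity: str, critical: list[str], guidance: list[str]) -> str:
    """Put critical rules first and restate them last (primacy and recency)."""
    critical_block = "\n".join(f"- {rule}" for rule in critical)
    guidance_block = "\n".join(f"- {item}" for item in guidance)
    sections = [
        identity,
        "CRITICAL RULES:\n" + critical_block,              # seen first
        "GUIDANCE:\n" + guidance_block,                    # everything else in the middle
        "REMINDER OF CRITICAL RULES:\n" + critical_block,  # seen last
    ]
    return "\n\n".join(sections)


# Hypothetical inputs, for illustration only.
prompt = build_system_prompt(
    identity="You are a coding agent.",
    critical=["NEVER modify files without user approval."],
    guidance=["Prefer small, reviewable changes."],
)
print(prompt)
```

Keeping the critical rules in one list means the opening and closing copies cannot drift apart when someone edits the prompt.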

📦 PRO TIP: Use clear and weighted instructions

LLMs respond well to emphasis, so don't be afraid to be over the top. Use CAPS LOCK, bold formatting, and words like CRITICAL, NEVER, ALWAYS. "It is CRITICAL that you NEVER modify files without user approval" works better than "Please avoid modifying files."

Also, avoid contradictions. If your system prompt says "be concise" but your guidelines say "provide detailed explanations," the agent will struggle. Keep instructions aligned across all layers. Of course, this is easier said than done. When in doubt, state in the system prompt which layer takes priority.

Tools: what the agent uses and when

Tools are a fundamental part of how you prompt the agent. This layer has two parts that work together to shape not just what the agent can do, but what it thinks to do.

- Availability prompts through constraint: "You can only use these tools."

- Definitions prompt through description: "Here's what each tool does and when to use it."

Tool availability is prompting through constraints. The combination of a system prompt and a specific set of tools is sometimes called a persona, and it shapes not just what the agent knows, but what it can do. At Augment, we define different personas by giving agents different tools based on their purpose:

- Research agent: view, codebase-retrieval, web-search, web-fetch (read-only exploration)

- Code agent: str-replace-editor, save-file, view, codebase-retrieval (editing capabilities)

- Plan agent: save-file, view, codebase-retrieval (planning and documentation)
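The personas above can be sketched as plain data plus a filter. The tool names come from the list; the `Persona` class, the placeholder system prompts, and the `allowed_tools` helper are assumptions for illustration, not Augment's implementation:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Persona:
    name: str
    system_prompt: str      # placeholder text, not a real production prompt
    tools: frozenset[str]   # the only tools this persona is allowed to see


PERSONAS = {
    "research": Persona(
        "research",
        "You explore code and the web. You never edit files.",
        frozenset({"view", "codebase-retrieval", "web-search", "web-fetch"}),
    ),
    "code": Persona(
        "code",
        "You make code changes.",
        frozenset({"str-replace-editor", "save-file", "view", "codebase-retrieval"}),
    ),
    "plan": Persona(
        "plan",
        "You write plans and documentation.",
        frozenset({"save-file", "view", "codebase-retrieval"}),
    ),
}


def allowed_tools(persona: Persona, registry: dict[str, dict]) -> dict[str, dict]:
    """Return only the tool definitions this persona may use."""
    return {name: spec for name, spec in registry.items() if name in persona.tools}
```

Because the filter runs before the request is built, a tool that is not in the persona's set never reaches the model's context at all.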

📦 PRO TIP: Treat tool descriptions as prompts

The tools you don't give an agent are as important as the tools you do give.

For example, to get the LLM to use our context engine effectively, we removed the grep tool. With grep available, the agent would default to it. Without grep, it was forced to use the more powerful context engine and we saw the corresponding jump in evals and user feedback.

For our security review agent, the tool set looks like this:

- ✅ view - Read files

- ✅ codebase-retrieval - Search for patterns

- ✅ trivy-scan - Scan for known CVEs in dependencies

- ❌ grep-search - Removed (forces use of the more powerful context engine)

- ❌ str-replace-editor - NO editing (read-only reviewer)

- ❌ save-file - NO file creation

Knowing which tools to use isn’t enough – the agent needs to know when and how. That’s where tool definitions come in, almost acting as a hidden prompt. Tool descriptions are serialized into the LLM's context and teach the agent how to think about problems.

At Augment, each tool has three components that influence agent behavior:

- Name: what the tool is called (should be self-explanatory)

- Description: what it does and when to use it (shown to the model)

- Input schema: what parameters it accepts, with descriptions for each

The more specific and directive your tool descriptions, the better the agent will understand when to use each tool. Write tool descriptions like you're onboarding someone on when and how to use the tool. Instead of "Searches code" write "Search the codebase for specific patterns, function names, or code snippets. Use this when you need to find where something is defined or used across multiple files."

Compare a minimal versus a directive tool definition in the example below.
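Here is a hedged sketch of that comparison, using two hypothetical definitions for trivy-scan. The dictionary layout (`name`, `description`, `input_schema`) mirrors the three components listed above, but the exact field names, the `path` parameter, and the wording are assumptions rather than Augment's real definitions:

```python
# Minimal: says what the tool is, not when or why to use it.
minimal_tool = {
    "name": "trivy-scan",
    "description": "Scans dependencies.",
    "input_schema": {
        "type": "object",
        "properties": {"path": {"type": "string"}},
        "required": ["path"],
    },
}

# Directive: says when to run it, what comes back, and why it matters.
directive_tool = {
    "name": "trivy-scan",
    "description": (
        "Scan the project's dependencies for known security vulnerabilities (CVEs). "
        "Run this BEFORE reviewing code so that dependency-level risks are identified first. "
        "Returns a list of vulnerabilities, each with a severity rating."
    ),
    "input_schema": {
        "type": "object",
        "properties": {
            "path": {
                "type": "string",
                "description": "Directory containing the dependency manifests to scan.",
            },
        },
        "required": ["path"],
    },
}
```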

The second version tells the agent when to use it (before reviewing code), what it returns (vulnerabilities with severity ratings), and why (to identify dependency-level risks). That context shapes how the agent plans its review.

📦 PRO TIP: Highlight critical tools in your system prompt

Tool definitions aren't the only place to teach tool usage. For your most important tools, add a dedicated section in the system prompt with concrete do and don't examples. At Augment, we include a block like this for our context engine:

Skills and files: how the agent works on *this* project

This layer is where you add context that's specific to your project, team, or domain. Unlike the system prompt (which is general) or tools (which are specific to capabilities), guidelines and skills are knowledge, conventions, and reusable capabilities scoped to a narrower domain or context (your project, a certain circumstance, a directory, etc.).

At Augment, we support multiple customization mechanisms that compose together. They layer with clear precedence: workspace rules override defaults, user rules override workspace rules, and so on.

- Templates: pre-built behaviors for common use cases; for example, “prototyper”

- Workspace guidelines: project-specific conventions

- User rules: personal preferences and coding style

- Skills: reusable capabilities in XML format (following the agentskills.io spec)
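The precedence described above (workspace rules override defaults, user rules override workspace rules) can be sketched as an ordered merge. Treating rules as key/value pairs is a simplifying assumption, since real guidelines are usually free text, and `resolve_rules` and the sample rules are hypothetical:

```python
def resolve_rules(*layers: dict[str, str]) -> dict[str, str]:
    """Merge rule layers in order; a later layer overrides an earlier one per key."""
    resolved: dict[str, str] = {}
    for layer in layers:
        resolved.update(layer)
    return resolved


# Hypothetical rules, listed from lowest to highest precedence.
defaults = {
    "formatting": "Follow the project formatter.",
    "tests": "Add tests for new code.",
}
workspace = {"formatting": "Use 2-space indentation."}
user = {"tests": "Skip tests for throwaway prototypes."}

rules = resolve_rules(defaults, workspace, user)
print(rules)
```

Making the order an explicit argument list keeps the override behavior a deliberate choice rather than an accident of where text happened to land in the context.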

📦 PRO TIP: Build infrastructure that scales

Guidelines and skills should be reusable across projects and shareable across teams. But how do you know which ones to build? At Augment, we've started analyzing agent trajectories and codebase smells to identify places where agents struggle repeatedly because a skill or guideline is missing. Build the infrastructure that your agents are telling you they need.

The user message: the actual request

The user message is where the rubber meets the road: the immediate task, plus runtime context and metadata. It might be a plain text request, like "Fix the authentication bug," but it can also include:

- Structured nodes: Text, images, tool results, code snippets

- Context markers: Canvas IDs, file references, linked documents

- Metadata: Silent messages, timestamps, conversation threading

The quality of the user message matters more than most people think. A vague request forces the agent to guess while a specific one lets it execute. This can be an area where examples pay dividends. Instead of saying "Write clean code," show an example of what clean code looks like in your codebase.

Notice how this narrows the agent's focus. The system prompt says to review for security issues broadly, the skill covers SQL injection patterns, and trivy-scan handles dependencies, but the user message tells the agent where to look first. The agent should listen.

📦 PRO TIP: Let tooling do the heavy lifting

The user message is a layer where tooling can help. At Augment, we built a prompt enhancer that takes conversation history and codebase context into account and rewrites user messages to be clearer, more specific, and less ambiguous before they reach the agent. It's a small step that meaningfully improves output quality, and it's a good example of treating even the user message as infrastructure rather than raw input.

How the layers work together

Let's see how all four layers reinforce each other. You've seen pieces of our security review agent throughout this post. Here's how they compose:

Layer 1: system prompt

Layer 2: tools

Layer 3: skills

Where SECURITY_REVIEW.md contains:

Layer 4: user message
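One way the four layers above might be assembled into a single request is sketched below. Only the quoted CRITICAL sentence, the tool names, and the SECURITY_REVIEW.md filename come from this post. The rest of the system prompt, the skill contents, the user message, and the request layout are hypothetical stand-ins:

```python
# Layer 1: system prompt. Only the CRITICAL sentence is quoted from the post.
SYSTEM_PROMPT = (
    "You are a security review agent. You review code and never edit it.\n"
    "It is CRITICAL that you flag any potential SQL injection vulnerabilities."
)

# Layer 2: tools. The read-only tool set listed earlier.
TOOLS = ["view", "codebase-retrieval", "trivy-scan"]

# Layer 3: skills. Hypothetical stand-in for the contents of SECURITY_REVIEW.md.
SKILLS = {
    "SECURITY_REVIEW.md": (
        "Flag SQL built by string concatenation or interpolation.\n"
        "Prefer parameterized queries."
    ),
}

# Layer 4: user message. Hypothetical request that says where to look first.
USER_MESSAGE = "Review this change for security issues. Start with the login handler."


def build_request(system_prompt: str, tools: list[str], skills: dict[str, str], user_message: str) -> dict:
    """Compose the four layers into one generic, provider-agnostic request dict."""
    skill_text = "\n\n".join(f"# {name}\n{body}" for name, body in skills.items())
    return {
        "system": f"{system_prompt}\n\n{skill_text}",  # identity first, then skill knowledge
        "tools": list(tools),                          # availability is a constraint
        "messages": [{"role": "user", "content": user_message}],
    }


request = build_request(SYSTEM_PROMPT, TOOLS, SKILLS, USER_MESSAGE)
```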

📦 PRO TIP: Repetition is your friend

If something is important, have it appear in multiple places: system prompt, guidelines, tool descriptions, and even tool results. The more frequently an important point shows up from different sources, the more likely the LLM will follow it.

In our example, the SQL injection requirement appears in three layers:

- System prompt (Layer 1): "It is CRITICAL that you flag any potential SQL injection vulnerabilities"

- Guidelines (Layer 3): Detailed examples and patterns to look for

- Tool definitions (Layer 2): trivy-scan description mentions scanning for "security vulnerabilities"
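If you want to check that kind of repetition rather than eyeball it, a small lint can report which layers mention a term. This is a rough sketch: `layers_mentioning` is a hypothetical helper, and the guideline and tool texts are invented examples built around the phrases quoted above:

```python
def layers_mentioning(term: str, layers: dict[str, str]) -> list[str]:
    """Return the names of the layers whose text contains the term (case-insensitive)."""
    needle = term.lower()
    return [name for name, text in layers.items() if needle in text.lower()]


# Hypothetical layer texts for the security review agent.
layers = {
    "system_prompt": "It is CRITICAL that you flag any potential SQL injection vulnerabilities",
    "guidelines": "Examples of SQL injection vulnerabilities: string-built queries, unescaped input.",
    "tool_definitions": "trivy-scan: scan dependencies for known security vulnerabilities.",
}

covered = layers_mentioning("vulnerabilities", layers)
print(covered)
```

Searching for a narrower term shows which layers carry the specific wording and which only reinforce the general theme.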

Put it into practice

Prompting isn't a single text field. It's a multi-layered system that acts as infrastructure. At Augment, we've found that thinking about prompting as infrastructure has made our agents more reliable, more maintainable, and easier to debug.

Embrace this approach by designing your layers intentionally, thinking about which layer overrides which. Then use the right layer for the right job. Each layer has different mechanisms for influencing agent behavior, each with its own strengths and use cases. Get your system prompt right, choose your tools carefully, and add guidelines as needed. As your agents get more complex, you'll naturally develop more sophisticated layering strategies. And, in this space, everything is an experiment! Try things, and let data from the results inform your approach.

📦 PRO TIP: Version your prompts like you version your code

A prompt that works perfectly with one model may fail with another. Version your prompts and pin them to specific models, just like you'd pin a dependency version. This lets you upgrade models confidently, A/B test prompt variations, and roll back when something breaks. Think system-prompt-v3-sonnet-4 not just system-prompt.
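A minimal sketch of that naming scheme, assuming a hypothetical in-memory `PromptRegistry` (a real setup would more likely keep prompts in version control or a config store):

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptVersion:
    name: str
    version: int
    model: str
    text: str

    @property
    def key(self) -> str:
        # e.g. "system-prompt-v3-sonnet-4"
        return f"{self.name}-v{self.version}-{self.model}"


class PromptRegistry:
    def __init__(self) -> None:
        self._prompts: dict[str, PromptVersion] = {}

    def register(self, prompt: PromptVersion) -> None:
        # Versions are immutable: changing the text means bumping the version.
        if prompt.key in self._prompts:
            raise ValueError(f"{prompt.key} is already registered; bump the version instead")
        self._prompts[prompt.key] = prompt

    def get(self, name: str, version: int, model: str) -> PromptVersion:
        """Fetch an exact pinned version, for example when rolling back."""
        return self._prompts[f"{name}-v{version}-{model}"]

    def latest(self, name: str, model: str) -> PromptVersion:
        """Fetch the highest registered version pinned to a given model."""
        candidates = [p for p in self._prompts.values() if p.name == name and p.model == model]
        if not candidates:
            raise KeyError(f"no prompt named {name!r} pinned to {model!r}")
        return max(candidates, key=lambda p: p.version)


registry = PromptRegistry()
registry.register(PromptVersion("system-prompt", 2, "sonnet-4", "...previous text..."))
registry.register(PromptVersion("system-prompt", 3, "sonnet-4", "...current text..."))
```

Rolling back is then a lookup of the earlier key, and an A/B test is two keys served side by side.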

The future of AI engineering isn't just about better models, it's about better infrastructure for working with those models. Prompting infrastructure is a key part of that future. If this is a challenge you’re tackling at your company, I’d love to hear about it.

Written by

Nikita Sirohi

Technical Lead

Nikita Sirohi is a Tech Lead at Augment, where she works on the agent platform and ecosystem integrations that power Augment's AI-driven workflows — including the prompting infrastructure described in this post. She previously built and scaled Augment's self-serve business. Before Augment, she was a Tech Lead at Ghost Autonomy (autonomous vehicles) and Pure Storage (distributed storage). Caltech CS '17, Mabel Beckman Prize.