The open-source AI code review tools worth evaluating in 2026 fall into three categories: mature static analyzers like SonarQube Community Edition for established quality gates; self-hosted options like Tabby and PR-Agent for teams requiring data sovereignty; and lightweight GitHub Actions like villesau/ai-codereviewer for quick automation experiments.

TL;DR

Open source AI code review tools deliver genuine value for data sovereignty and cost control, but most stop at file-level checks. SonarQube remains the most reliable foundation. Self-hosted options like Tabby and PR-Agent require at least 8GB VRAM and multi-week deployment timelines. Matching tool capabilities to actual team constraints matters more than feature checklists.

See how Context Engine handles complex reviews.

Free tier available · VS Code extension · Takes 2 minutes

Testing these tools across enterprise codebases surfaced critical limitations that undermine their value. Teams should allocate substantial time for validating AI-generated code outputs. Industry analysis of 470 pull requests found AI-generated code contained 1.7x more defects than human-written code, while Veracode's testing of 100+ LLMs revealed 45% of AI-generated code samples introduced OWASP Top 10 vulnerabilities.

AI code review tools now have to validate a growing volume of defect-prone AI-generated code, and that validation consumes a disproportionate amount of senior engineers' time. Faros AI analysis found that while code generation increased by 2 to 5x, review time increased by 91% and PR size grew by 154%, resulting in flat net delivery time. Despite these challenges, specific tools deliver measurable value when matched to appropriate use cases.

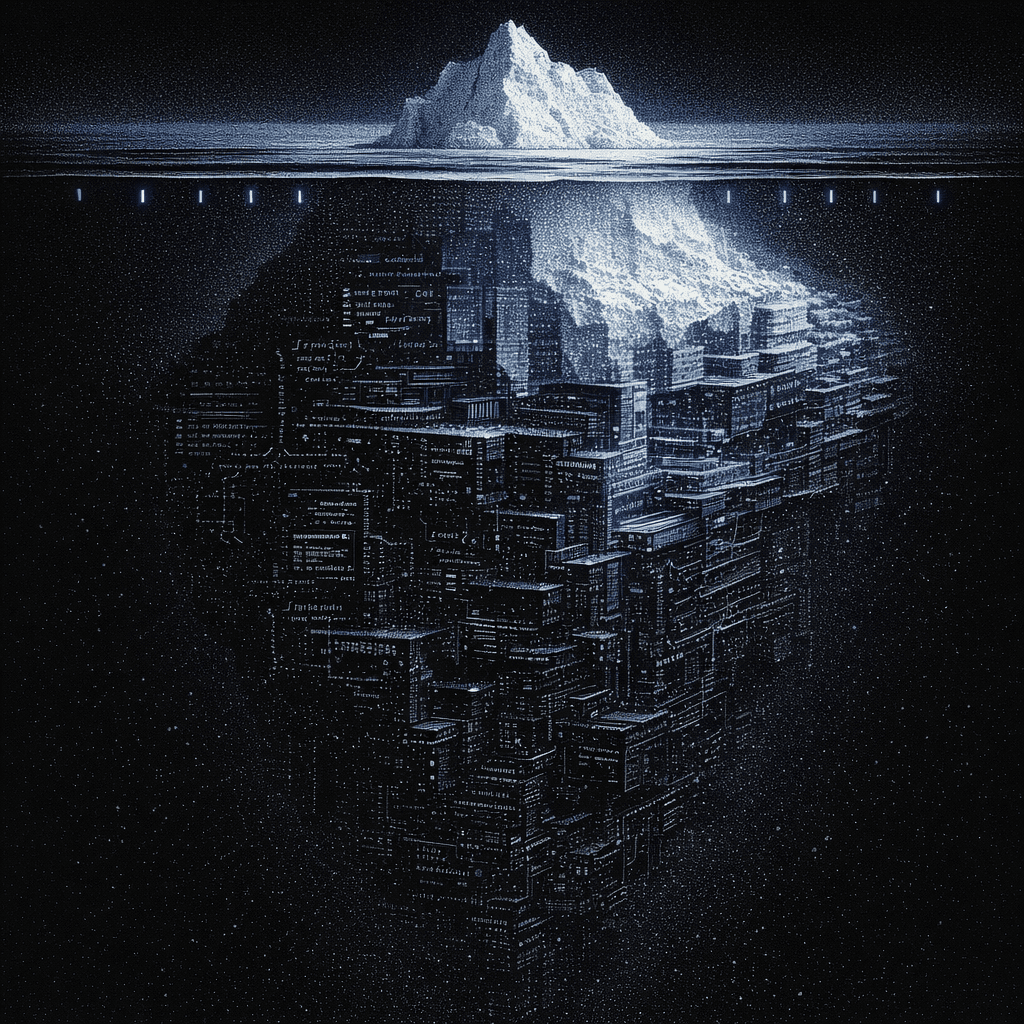

Real codebases are years of good intentions, architectural compromises that made sense at the time, and the accumulated decisions of developers who've since moved on. You know this if you've ever spent a morning grep-ing through hundreds of thousands of files trying to understand how authentication actually works.

The promise of AI code review is compelling: automated detection of bugs, security vulnerabilities, and architectural violations before they hit production. But new AI code review tools launch almost every week. Will they catch the bugs that matter, or just create noise that teams learn to ignore?

As an engineer who's evaluated dozens of these tools on enterprise monorepos with 400K+ files, I know exactly which ones deliver and which ones disappoint. The commercial landscape dominates enterprise AI code review, with CodeRabbit, Greptile, and Graphite Agent capturing the majority of market share. Open source alternatives cluster around traditional static analysis or early-stage projects with documentation gaps. For teams that need review beyond file-level analysis, Augment’s Intent workspace coordinates multiple specialist agents against a living specification, with a Verifier agent that validates implementation against architectural contracts before code reaches human review.

I evaluated 10 open source options across the messiest, most realistic scenarios I could find, not the clean examples from their marketing sites.

Top 10 Open Source AI Code Review Tools at a Glance

While GitHub star counts look good on marketing pages, they don't predict whether an AI code review tool will catch the architectural violations that actually cause production failures. I didn't waste time evaluating these tools on clean, well-documented codebases.

Each tool was evaluated across six criteria that matter for enterprise teams:

- Self-hosting capability: Can you keep code on your infrastructure?

- GitHub integration: Native workflows or bolt-on complexity?

- GitLab integration: Critical for teams not on GitHub

- Polyglot support: Real coverage or marketing claims?

- Model flexibility: Locked to one provider or configurable?

- Production maturity: Battle-tested or experimental?

Here's how the 10 leading open source AI code review tools stack up:

| Tool | Self-Hosted | GitHub | GitLab | AI-Powered | Best For |
|---|---|---|---|---|---|
| SonarQube Community | Yes | Yes | Yes | No (Rule-based) | Quality gates foundation |
| PR-Agent | Yes | Yes | Yes | Yes (Ollama) | Data sovereignty |
| Tabby | Yes | Yes | Yes | Yes (Local models) | Self-hosted AI assistance |
| villesau/ai-codereviewer | No | Yes | No | Yes (OpenAI) | Lightweight experiments |
| Hexmos LiveReview | Yes | No | Yes | Yes (Ollama) | GitLab-native teams |
| Semgrep | Yes | Yes | Yes | No (Rule-based) | Custom security rules |
| CodeQL | Partial | Yes | No | No (Rule-based) | GitHub security scanning |
| cirolini/genai-code-review | No | Yes | No | Yes (OpenAI) | Quick setup |
| Kodus AI | Yes | Yes | Unknown | Yes (Agent-based) | Agent-based review workflows |
| snarktank/ai-pr-review | GitHub Actions | Yes | No | Yes (Claude) | Anthropic integration |
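To make the comparison easier to apply, here is a minimal Python sketch that encodes the table's values and filters them by team constraints. The `Tool` class and `shortlist` helper are illustrative names, not part of any tool listed here, and the sketch assumes that only an explicit "Yes" satisfies a requirement.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    self_hosted: str  # "Yes", "No", "Partial", or another note from the table
    github: str
    gitlab: str
    ai_powered: bool


# Values transcribed from the comparison table in this article.
TOOLS = [
    Tool("SonarQube Community", "Yes", "Yes", "Yes", False),
    Tool("PR-Agent", "Yes", "Yes", "Yes", True),
    Tool("Tabby", "Yes", "Yes", "Yes", True),
    Tool("villesau/ai-codereviewer", "No", "Yes", "No", True),
    Tool("Hexmos LiveReview", "Yes", "No", "Yes", True),
    Tool("Semgrep", "Yes", "Yes", "Yes", False),
    Tool("CodeQL", "Partial", "Yes", "No", False),
    Tool("cirolini/genai-code-review", "No", "Yes", "No", True),
    Tool("Kodus AI", "Yes", "Yes", "Unknown", True),
    Tool("snarktank/ai-pr-review", "GitHub Actions", "Yes", "No", True),
]


def shortlist(tools, *, self_hosted=False, gitlab=False, ai_powered=False):
    """Return the names of tools that meet every requested constraint.

    Assumption: "Partial", "Unknown", and similar notes count as unmet.
    """
    names = []
    for tool in tools:
        if self_hosted and tool.self_hosted != "Yes":
            continue
        if gitlab and tool.gitlab != "Yes":
            continue
        if ai_powered and not tool.ai_powered:
            continue
        names.append(tool.name)
    return names


# Example: a GitLab team that needs self-hosted, AI-powered review.
print(shortlist(TOOLS, self_hosted=True, gitlab=True, ai_powered=True))
# ['PR-Agent', 'Tabby', 'Hexmos LiveReview']
```

Treating "Unknown" as unmet is a conservative choice; a team willing to verify GitLab support itself could relax that rule.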

How These Tools Were Tested

Most comparison articles test AI code review tools on clean codebases with perfect documentation and modern patterns. That's not reality for teams managing legacy systems and distributed architectures.

Across more than 40 hours, I used each tool on a polyglot monorepo with 450K+ files spanning Python, TypeScript, Java, and Go. This environment represents the messy reality of enterprise development: inconsistent patterns, missing documentation, and architectural decisions made by engineers who left years ago.

I focused on three scenarios that expose real limitations:

- Cross-service dependency detection: Can it identify breaking changes across microservice boundaries?

- Legacy code understanding: Does it respect existing architecture or suggest rewrites?

- False positive rate: How much noise versus signal in production CI/CD?
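The false positive scenario can be quantified with a simple triage tally. The sketch below is one possible way to do it, assuming each AI suggestion has been hand-labeled as either `"useful"` or `"false_positive"`; those label names and the `triage_summary` helper are hypothetical, not taken from any tool in this list.

```python
from collections import Counter

ALLOWED_LABELS = {"useful", "false_positive"}  # hypothetical triage labels


def triage_summary(labels):
    """Summarize hand-labeled review suggestions into signal and noise shares."""
    counts = Counter(labels)
    unknown = set(counts) - ALLOWED_LABELS
    if unknown:
        raise ValueError(f"unexpected labels: {sorted(unknown)}")
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no suggestions to summarize")
    return {
        "total": total,
        "precision": counts["useful"] / total,
        "false_positive_share": counts["false_positive"] / total,
    }


# Example: 9 suggestions on a PR, 3 of which turned out to be noise.
sample = ["useful"] * 6 + ["false_positive"] * 3
summary = triage_summary(sample)
print(f"{summary['false_positive_share']:.0%} of suggestions were noise")
```

Tracking this share per tool over a few weeks of real PRs gives a more honest comparison than a single demo run.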

Why this matters: Most tools perform well on isolated file review. Enterprise teams need tools that handle architectural context across hundreds of thousands of files. Cortex's 2026 benchmark report found that incidents per pull request increased by 23.5% year-over-year, even as PRs per author increased by 20%, underscoring the gap between code generation speed and review quality.

1. SonarQube Community Edition

Ideal for: Enterprise teams needing established quality gates across Python, TypeScript, Java, Go, and Rust in polyglot monorepos; organizations with existing CI/CD infrastructure; and teams prioritizing predictable, rule-based detection over AI-based probabilistic analysis.

SonarQube Community Edition remains the most mature open source option for code quality enforcement, with approximately 10,300 GitHub stars and proven enterprise adoption. The latest release, v26.2.0 (February 2026), added 29 new Python async rules and 16 FastAPI security rules. The tool provides static analysis across 21 languages without AI-powered contextual understanding, which turns out to be an advantage: predictable rule-based detection produces fewer false positives than probabilistic AI reviewers.

Notable update since mid-2025: SonarQube added Rust language support (v25.5.0) with 85 rules, Code Coverage import, and Clippy output integration. Teams should also note that JDK 21 is now required as of v26.1.0, with Java 17 support ending July 2026.

What was the testing outcome?

After running SonarQube on our 450K-file monorepo, the results were exactly what I expected: reliable, predictable, and boring in the best possible way.

SonarQube caught formatting inconsistencies, OWASP Top 10 vulnerabilities, and code smells with near-zero false positives. The Community Edition supports running analysis across large repositories, making it a practical fit for teams managing complex, multi-component codebases.

Cross-service scenarios exposed the fundamental limitation. SonarQube missed architectural drift, breaking changes across service boundaries, and code that did not match its requirements at all. The pattern became clear: excellent for file-level quality, blind to architectural context.

What's the setup experience?

Self-hosted deployment with Docker Compose requires infrastructure provisioning and CI/CD integration. Setup isn't instant: estimated timeframes range from 6 to 13 weeks per DX's implementation framework, which outlines a 30-60-90-day phased rollout for enterprise AI code analysis tools.

Monorepo support requires explicit per-project configuration rather than automatic detection. This adds complexity but produces reliable results once configured.
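As a rough illustration of what per-project configuration involves, the Python sketch below renders a minimal `sonar-project.properties` body for each sub-project. `sonar.projectKey` and `sonar.sources` are standard SonarScanner analysis parameters; the service names and paths are hypothetical, and a real setup will likely need more properties than these two.

```python
def render_sonar_properties(project_key, sources):
    """Render a minimal sonar-project.properties body for one sub-project."""
    lines = [
        f"sonar.projectKey={project_key}",
        # sonar.sources takes a comma-separated list of source directories.
        f"sonar.sources={','.join(sources)}",
    ]
    return "\n".join(lines) + "\n"


# Hypothetical monorepo layout: one entry per separately analyzed project.
SERVICES = {
    "billing-api": ["services/billing/src"],
    "web-frontend": ["apps/web/src", "apps/web/lib"],
}

for key, sources in SERVICES.items():
    print(f"# {key}/sonar-project.properties")
    print(render_sonar_properties(key, sources))
```

Generating these files from one source of truth keeps project keys consistent as services are added or renamed.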

SonarQube Community Edition pros

- 20+ years of battle-tested stability: Comprehensive documentation, active community forums, and established enterprise adoption patterns make it the lowest-risk starting point.

- Predictable rule-based detection: No AI hallucinations, no probabilistic guessing. When SonarQube flags something, it's based on deterministic rules you can audit and customize.

- Solid polyglot support: 21 languages with consistent quality gates, including recently added Rust analysis with 85 rules.

- Zero licensing fees: LGPL-3.0 for the community edition. Infrastructure costs exist, but no per-seat charges.

SonarQube Community Edition cons

- Architectural blindness: Catches file-level issues but misses how changes affect dependent services. For teams where cross-service breaking changes are a real production risk, Augment Code's Context Engine maps semantic dependencies across 400,000+ files, providing the architectural layer SonarQube can't.

- Not AI-powered: Requires complementary solutions for contextual analysis. Consider Semgrep for custom security rules and Ollama-powered review tools for AI-driven insights.

- JDK 21 now required: Teams on Java 17 must plan migration before the July 2026 deprecation deadline.

- Monorepo configuration overhead: Requires explicit per-project setup. Not seamless out of the box.

Pricing

- Community Edition: Free, self-hosted (LGPL-3.0)

- Infrastructure costs: Variable based on team size; plan for compute, storage, and CI/CD runner costs

- Engineering time: 6 to 13 weeks for initial setup, ongoing maintenance

Verdict on SonarQube Community Edition

Choose SonarQube if: Established, predictable quality gates matter most, and the team has infrastructure expertise for self-hosted deployment.

Skip it if: AI-powered contextual review or cross-service architectural analysis is required. SonarQube is a foundation, not a complete solution.

2. PR-Agent (Qodo)

Ideal for: Security-sensitive teams in regulated industries requiring complete data sovereignty, organizations with existing self-hosted infrastructure, and teams willing to invest significant configuration time for zero external API calls.

PR-Agent is an actively maintained open source AI code review tool with 10,500 stars, 1,300 forks, and 200 contributors. The latest release, v0.32 (February 2026), added support for Claude Opus 4.6 and Gemini-3-pro-preview, and fixed GPT-5 reasoning_effort parameter handling. The project is currently being donated to an open-source foundation, with its first external maintainer recently appointed, signaling a move toward community governance.

What was the testing outcome?

I tested PR-Agent, expecting straightforward Ollama integration. What I found was configuration headaches that consumed a disproportionate amount of evaluation time.

The promise of air-gapped deployment is real in theory. Ollama support has been merged into the codebase. However, critical configuration bugs undermine self-hosted deployments in practice.

GitHub Issue #2098 documents the tool defaulting to hardcoded models (gpt-5-2025-08-07, o4-mini) even when custom OpenAI-compatible endpoints are configured via .env files. Issue #2083 shows the Gemini model configuration being completely ignored. Both issues have been open for 4+ months with no resolution as of March 2026, directly blocking local LLM and alternative model deployments.

The pattern became clear: data sovereignty is the goal, but teams should budget significant time for configuration troubleshooting. These aren't minor annoyances. They are blockers for air-gapped and multi-model use cases.

What's the setup experience?

If PR-Agent needs to talk to an Ollama instance bound to localhost, self-hosted GitHub Actions runners with Ollama installed are required. Jobs running in separate containers on GitHub-hosted runners cannot reach localhost services.
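A quick pre-flight check can reveal this networking problem before a review job fails. The sketch below uses only the Python standard library to test whether a TCP connection to an Ollama endpoint succeeds from wherever the job runs. Port 11434 is Ollama's default; the `can_reach` helper is an illustrative name and is not part of PR-Agent.

```python
import socket

OLLAMA_DEFAULT_PORT = 11434  # Ollama's default listening port


def can_reach(host, port=OLLAMA_DEFAULT_PORT, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution errors.
        return False


if __name__ == "__main__":
    # Run inside the CI job: False here means the review step cannot
    # reach a localhost-bound Ollama instance either.
    print("Ollama reachable:", can_reach("localhost"))
```

A successful TCP connection only shows the port is open; it does not confirm that the expected model is loaded.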

The setup timeline ranges from 6 to 13 weeks per DX's implementation framework, including infrastructure provisioning, integration development, and security review. This isn't a weekend project.

PR-Agent pros

- True data sovereignty goal: Zero external API calls when properly configured. Code stays on your infrastructure.

- Active development and governance transition: v0.32 (February 2026) with ongoing model support additions and a move toward foundation-based community ownership.

- AGPL-3.0 license: No features have been commercialized from the open source codebase. Qodo Merge is a separate commercial offering.

PR-Agent cons

- Configuration reliability issues: Issues #2098 and #2083 remain unresolved after 4+ months, blocking local LLM and alternative model configuration. Budget significant debugging time.

- Self-hosted runner requirement: GitHub-hosted runners can't access localhost Ollama. Additional infrastructure complexity.

- Model quality variance: Performance depends heavily on model selection and proper endpoint configuration.

Pricing

- Software: Free, open source (AGPL-3.0)

- GPU infrastructure: Minimum 8GB VRAM for CodeLlama-7B per Tabby's official hardware FAQ

- Engineering time: 6 to 13 weeks deployment, ongoing maintenance

Verdict on PR-Agent

Choose PR-Agent if: Data sovereignty is non-negotiable for compliance reasons, and there is DevOps capacity for extended configuration work. Monitor Issues #2098 and #2083 for resolution before committing to local LLM deployment.

Skip it if: Reliable out-of-the-box local model functionality is required or dedicated infrastructure expertise is limited.

3. Tabby

Ideal for: Teams prioritizing data control with GitHub or GitLab repository integration, organizations with existing GPU infrastructure, and developers wanting self-hosted AI coding assistance without cloud dependencies.

Tabby provides self-hosted AI coding assistance with no dependency on external databases or cloud services. With 33,000 GitHub stars, 1,700 forks, and 249 total releases, it is the most actively developed project in this list. The latest release, v0.32.0 (January 25, 2026), demonstrates consistent release velocity. The University of Toronto published a verified Docker Compose configuration for production deployment, demonstrating institutional adoption beyond hobbyist experimentation.

What was the testing outcome?

What stood out during the Tabby evaluation: it is a code-completion tool first, with review features in a supporting role.

The tool supports GitHub and GitLab repository integrations with documented SSO options for GitHub and Google OAuth. Its Rust-based architecture (92.9% Rust) prioritizes performance for code assistance workloads.

Tabby's architecture prioritizes coding assistance over dedicated code review. Review features exist, but feel secondary. In PR workflows, the suggestions were completion-oriented rather than review-oriented.

What's the setup experience?

Per LocalAI Master's hardware analysis, 8GB VRAM handles CodeLlama-7B with Q4_K_M quantization; 16GB VRAM handles 13B to 14B models; and 32GB+ is recommended for enterprise-grade 13B to 34B parameter models serving concurrent users.
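Those tiers can be expressed as a small lookup. The following Python sketch simply restates the thresholds quoted above; the `model_tier_for_vram` function is a hypothetical helper, and real capacity also depends on context length, quantization, and concurrency.

```python
def model_tier_for_vram(vram_gb):
    """Map available VRAM (in GB) to the model tier quoted in the text."""
    if vram_gb >= 32:
        return "13B to 34B models serving concurrent users"
    if vram_gb >= 16:
        return "13B to 14B models"
    if vram_gb >= 8:
        return "CodeLlama-7B with Q4_K_M quantization"
    return "below the 8GB minimum"


for vram in (6, 8, 16, 24, 48):
    print(f"{vram}GB VRAM -> {model_tier_for_vram(vram)}")
```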

Infrastructure costs scale with model size. Initial indexing took about 30 minutes on the monorepo used for evaluation.

Tabby pros

- Self-contained architecture: No external database or cloud service dependencies. Your infrastructure, your control.

- Highest release velocity: 249 releases and 33,000 stars indicate strong community investment and rapid iteration.

- Institutional validation: The University of Toronto deployment guide provides a verified production configuration.

Tabby cons

- Code assistance focus: Review features are secondary to completion capabilities. May not meet dedicated review requirements.

- GPU requirements: The 8GB VRAM minimum creates a hardware barrier for some teams.

- SSO limitations: Officially documented SSO is limited to GitHub and Google OAuth. GitLab SSO requires workarounds.

Pricing

- Software: Free, open source

- GPU infrastructure: 8GB VRAM minimum, scaling to 32GB to 80GB for concurrent users

- Compute costs: Cloud GPU alternatives run roughly $1,000–1,500/month for A100 and $2,200–3,000/month for H100 spot instances
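To put the GPU figures in context, the sketch below computes how many seats of a per-user SaaS plan would cost the same as one cloud GPU per month. It assumes the $12/user/month CodeRabbit Lite price quoted elsewhere in this article as the comparison point, and it deliberately ignores engineering time, storage, and maintenance, so treat it as a rough lower bound rather than a full cost model.

```python
import math

SAAS_PRICE_PER_SEAT = 12.0  # USD/user/month, CodeRabbit Lite as quoted in this article


def break_even_seats(gpu_monthly_cost, seat_price=SAAS_PRICE_PER_SEAT):
    """Smallest team size at which SaaS seats cost at least as much as the GPU."""
    return math.ceil(gpu_monthly_cost / seat_price)


# Monthly cloud GPU ranges quoted above (USD).
for label, low, high in [("A100", 1000, 1500), ("H100 spot", 2200, 3000)]:
    print(f"{label}: break-even at {break_even_seats(low)} to {break_even_seats(high)} seats")
# A100: break-even at 84 to 125 seats
# H100 spot: break-even at 184 to 250 seats
```

On GPU cost alone, self-hosting starts to compete with per-seat pricing somewhere around 84 to 125 developers for an A100.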

Verdict on Tabby

Choose Tabby if: Self-hosted AI coding assistance is the priority and GPU infrastructure is already available. Code completion comes first, with review as a bonus.

Skip it if: Dedicated code review workflows are the main requirement. Tabby's assistance-first architecture may not fit review-focused needs.

4. villesau/ai-codereviewer

Ideal for: Small teams starting AI code review experiments with minimal infrastructure investment, organizations already using OpenAI APIs, and developers looking for quick setup via native GitHub Actions.

With approximately 1,000 GitHub stars and 882 forks, villesau/ai-codereviewer has the highest community adoption among open source GitHub Actions options. Native workflow integration means setup requires only adding a workflow file rather than deploying infrastructure.

Important caveat: The last release was December 2, 2023, and it targets the gpt-4-1106-preview model. Teams should verify API compatibility with current OpenAI model offerings before adopting.
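Release age is easy to check before adopting any of these Actions. The sketch below counts full months since the December 2, 2023 release; the March 1, 2026 reference date is an assumption chosen for illustration, and `full_months_between` is a hypothetical helper.

```python
from datetime import date


def full_months_between(start, end):
    """Count whole calendar months elapsed from start to end."""
    months = (end.year - start.year) * 12 + (end.month - start.month)
    if end.day < start.day:
        months -= 1  # the final month is not yet complete
    return months


last_release = date(2023, 12, 2)  # release date stated in the text
as_of = date(2026, 3, 1)          # assumed evaluation date

print(full_months_between(last_release, as_of))  # 26
```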

What was the testing outcome?

After working with villesau/ai-codereviewer, the results matched exactly what lightweight GitHub Actions usually promise: easy setup, decent results, and significant validation overhead.

The tool uses OpenAI's GPT-4 to generate reviews, providing stronger contextual understanding than rule-based static analysis. On the test PRs, it caught logic errors and suggested improvements that grep-based tools missed.

Then the false positives appeared. Roughly one-third of suggestions required human verification to determine relevance. This aligns with broader findings: Anthropic's 2026 report found that engineers can fully delegate only 0 to 20% of AI-assisted tasks, despite using AI in approximately 60% of their work.

What's the setup experience?

Setup took under an hour. Add a workflow file, configure an OpenAI API key as a secret, and the tool is running. No infrastructure provisioning, no GPU requirements, and no Docker deployments.

The tradeoff: code leaves your infrastructure. Every PR diff goes to OpenAI's API for analysis.

villesau/ai-codereviewer pros

- Fastest setup: Only workflow file configuration. No infrastructure to provision or maintain.

- Highest community adoption: ~1,000 stars and 882 forks indicate active usage and the availability of troubleshooting resources.

- GPT-4 quality: Stronger contextual understanding than rule-based alternatives.

villesau/ai-codereviewer cons

- Stale maintenance: No release in 26+ months. The pinned model version (gpt-4-1106-preview) may cause API compatibility issues.

- External API dependency: Code leaves your infrastructure. Not suitable for security-sensitive teams.

- Validation overhead: AI suggestions require human verification. Budget time for false positive triage.

Pricing

- Software: Free, open source

- OpenAI API costs: Variable based on PR volume and diff size

- Alternative: Managed SaaS like CodeRabbit at $12/user/month (Lite tier) offers predictable pricing; for enterprise teams needing cross-repository context and SOC 2 Type II compliance, Augment Code is the stronger fit

Verdict on villesau/ai-codereviewer

Choose it if: Fast experimentation with AI code review matters, the code is not security-sensitive, and OpenAI API costs are acceptable. Verify the tool still works with current OpenAI model versions before committing.

Skip it if: Data sovereignty matters, active maintenance is required, or predictable costs at scale are important.

5. Hexmos LiveReview

Ideal for: GitLab-native teams underserved by GitHub-focused tools, organizations requiring self-hosted Ollama deployment, and teams with data sovereignty requirements on GitLab workflows.

Hexmos LiveReview is an AI code review tool for GitLab that supports Ollama models. The official product page describes it as offering free, unlimited AI code reviews that run on commit with git hook integration.

Licensing note: Hexmos LiveReview uses a custom source-available license rather than a standard OSI-approved open source license. Enterprise legal teams should review the license terms before adoption.

What was the testing outcome?

During evaluation, Hexmos LiveReview proved interesting precisely because most AI code review tools are primarily designed for GitHub, leaving GitLab users underserved.

The tool has 22 GitHub stars, 3 forks, and 717 commits with active development. Feature development continued between September 2025 and February 2026, though no formal releases have been published, only tags. Its Go-based architecture integrates via git hooks for commit-level code reviews.

What's the setup experience?

A self-hosted Ollama deployment requires GPU infrastructure, typically with at least 8GB of VRAM. Integration with existing GitLab CI/CD pipelines adds engineering time consistent with other self-hosted deployments in this list.

Hexmos LiveReview pros

- GitLab-native design: Built specifically for GitLab workflows, not a GitHub tool with GitLab support bolted on.

- Self-hosted Ollama: Data stays within your infrastructure.

- Active commits: 717 commits indicate ongoing development.

Hexmos LiveReview cons

- Source-available, not OSI-approved open source: Custom license may be a blocker for enterprise legal review.

- Limited adoption: 22 stars and 3 forks. Fewer community troubleshooting resources than established alternatives.

- No formal releases: Tags exist, but no published releases, making version management difficult.

- GPU requirements: Same 8GB VRAM minimum as other self-hosted options.

Pricing

- Software: Free (source-available license; review terms for commercial use)

- GPU infrastructure: Minimum 8GB VRAM

- Engineering time: Variable for GitLab CI/CD integration

Verdict on Hexmos LiveReview

Choose it if: A GitLab-native workflow matters, GPU infrastructure for self-hosted Ollama is available, and the legal team approves the source-available license.

Skip it if: Extensive community support, formal release management, or a standard open source license is required.

For teams needing architectural context beyond what file-isolated tools provide, Augment Code's Context Engine analyzes 400,000+ files using a semantic dependency graph. Intent takes this further: its Verifier agent validates every PR against a living specification that captures cross-service contracts, catching breaking changes that file-level review tools miss entirely.

Catch cross-service breaking changes that file-level tools miss.


6. Semgrep

Ideal for: Security-focused teams requiring custom rules specific to organizational coding standards, organizations with dedicated security engineering capacity, and teams needing pattern-based scanning across polyglot environments.

Semgrep's pattern-based scanning allows teams to write and enforce security or code-quality best practices specific to their stack.

Licensing update: In 2024, Semgrep split its licensing model. Per the official announcement, the core scanning engine remains open source under LGPL 2.1, but the Semgrep-maintained rules have moved to a proprietary Semgrep Rules License v.1.0 that restricts use to internal, non-competing, and non-SaaS contexts. Individual developers and companies using Semgrep for internal security scanning are unaffected, but commercial or SaaS use cases should review the license terms. Semgrep OSS has been rebranded to Semgrep Community Edition.

What was the testing outcome?

I evaluated Semgrep for its custom rule engine and found exactly what its reputation promises: a powerful pattern scanner that requires dedicated security investment to realize its potential.

The tool integrates with GitHub, GitLab, and CI/CD pipelines through standard workflows. Security teams often prefer Semgrep for developer-centric workflows that catch OWASP Top 10 vulnerabilities without the noise generated by generic scanners. Recent updates include OWNERS/CODEOWNERS file integration, improved parsing of composer.lock and tsconfig.json, and support for the uv package manager.

On the monorepo used for evaluation, Semgrep's custom rules caught organization-specific patterns that off-the-shelf tools missed. The tradeoff: writing those rules took dedicated security engineering time.
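For a sense of what a custom rule looks like, the sketch below builds a minimal rule structure in Python and prints it as JSON for inspection. The top-level `rules` list and the `id`, `languages`, `severity`, `message`, and `pattern` fields follow Semgrep's rule schema; the rule ID and message are hypothetical, and in practice the rule would normally be saved as a YAML file.

```python
import json

# Hypothetical organization-specific rule: flag any call to eval() in Python.
rule_file = {
    "rules": [
        {
            "id": "org-no-eval",  # hypothetical rule ID
            "languages": ["python"],
            "severity": "WARNING",
            "message": "Avoid eval(); parse input explicitly instead.",
            # In Semgrep patterns, "..." matches any sequence of arguments.
            "pattern": "eval(...)",
        }
    ]
}

RULE_JSON = json.dumps(rule_file, indent=2)
print(RULE_JSON)
```

Rules this small are quick to write; the engineering time goes into tuning patterns against real code until the noise is acceptable.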

What's the setup experience?

The Community Edition eliminates per-seat fees. Self-hosted deployments require infrastructure investment and maintenance labor, about 0.25 to 0.5 FTE for enterprise deployments. The commercial AppSec Platform starts at $40/month per contributor for teams wanting managed rules and SCA capabilities.

Custom rule development requires dedicated engineering time for production deployment.

Semgrep pros

- Custom rule flexibility: Write rules specific to your organization's patterns and security requirements.

- Developer-centric workflows: Catch OWASP Top 10 without excessive noise.

- Broad integration: Works with GitHub, GitLab, and standard CI/CD pipelines.

Semgrep cons

- Split licensing model: Engine is LGPL-2.1, but Semgrep-maintained rules use a proprietary license that restricts commercial and SaaS use.

- Learning curve: Pattern-based rule development requires dedicated security engineering capacity.

- Rust support is partial: Custom development is needed for comprehensive Rust coverage.

- Not AI-powered: Traditional static analysis, not contextual AI review.

Pricing

- Community Edition: Free (LGPL 2.1 engine; proprietary rules license)

- AppSec Platform: $40/month per contributor

- Maintenance: 0.25 to 0.5 FTE for enterprise self-hosted deployments

Verdict on Semgrep

Choose Semgrep if: Security engineering capacity exists to develop custom rules, and pattern-based detection specific to the stack is needed. Review the Semgrep Rules License if a commercial product is involved.

Skip it if: Dedicated security engineering resources are not available or if contextual AI review matters more than pattern matching.

7. CodeQL

Ideal for: Teams already using GitHub Advanced Security, organizations needing sophisticated semantic security analysis, and GitHub-native workflows requiring minimal additional configuration.

CodeQL is positioned as a GitHub-native static analysis tool. CodeQL requires GitHub Advanced Security licensing to analyze private repositories at scale.

What was the testing outcome?

The result was excellent security scanning gated behind licensing requirements.

For teams already using GitHub Advanced Security, CodeQL integration requires minimal additional configuration. The CodeQL Action (MIT license, 1.5k stars) enables automated security scanning for every PR, using CodeQL Bundle v2.24.3, which was released in March 2026, confirming active maintenance.

The semantic analysis quality is strong. CodeQL caught vulnerabilities that simpler pattern-based tools missed. The tradeoff: private repository analysis at scale requires paid licensing.

What's the setup experience?

Free for public repositories and open source query development. Private repository scanning requires GitHub Advanced Security licensing, and pricing varies by organization and requires direct sales engagement.

Teams with established GitHub Actions workflows can integrate within 2 to 4 hours for basic configuration. Full organization deployment typically requires 4 to 6 weeks, including the pilot phase.

CodeQL pros

- Sophisticated semantic analysis: Catches vulnerabilities that pattern-based tools miss.

- GitHub-native integration: Minimal configuration for teams already on GitHub.

- Free for public repos: Open source projects can use all capabilities.

CodeQL cons

- Licensing requirements: Private repository analysis requires GitHub Advanced Security.

- GitHub lock-in: Teams using GitLab, Bitbucket, or self-hosted Git need alternatives.

- Not AI-powered: Rule-based semantic analysis, not contextual AI review.

Pricing

- Public repositories: Free

- Private repositories: GitHub Advanced Security licensing required, pricing not publicly disclosed

- Alternative: SonarQube Community Edition provides broader platform support without licensing

Verdict on CodeQL

Choose CodeQL if: GitHub Advanced Security is already in place, and sophisticated security scanning with minimal setup is the goal.

Skip it if: GitLab or other platforms are in use, or Advanced Security licensing costs are hard to justify.

8. cirolini/genai-code-review

Ideal for: Teams looking for a quick GitHub Actions setup with GPT model flexibility, organizations comfortable with an OpenAI API dependency, and developers experimenting with AI code review before larger investments.

Listed on the GitHub Actions Marketplace, cirolini/genai-code-review supports GPT-3.5-turbo and GPT-4 models. It has 366 stars, 72 forks, and is used by 120 repositories. The latest release (v2) was published in May 2024, meaning the tool has been without updates for nearly two years as of early 2026.

What was the testing outcome?

What emerged was comparable functionality to villesau/ai-codereviewer, with model flexibility as the key differentiator.

The codebase is 98.3% Python and has only 2 primary contributors, so fixes and updates depend on a very small pool of maintainers. With just 6 total releases and 53 commits, the project's longevity is tied to the continued interest of those contributors.

What's the setup experience?

Initial setup typically takes 2 to 4 hours for basic configuration. Infrastructure costs are minimal beyond API usage.

cirolini/genai-code-review pros

- Model flexibility: Choose between GPT-3.5-turbo and GPT-4 based on cost-benefit and quality needs.

- GitHub Marketplace listing: Indicates community validation beyond personal projects.

- Quick setup: 2 to 4 hours to production for basic configuration.

cirolini/genai-code-review cons

- Stale releases: Last release May 2024, approaching two years without updates.

- External API dependency: Same data sovereignty concerns as other OpenAI-based tools.

- Limited contributor base: Only 2 primary contributors. Risk of abandonment.

- No self-hosting: API-only architecture.

Pricing

- Software: Free, open source

- OpenAI API: Variable based on model choice and usage volume

- GPT-3.5-turbo: Significantly cheaper than GPT-4 for cost-sensitive teams

Verdict on cirolini/genai-code-review

Choose it if: An OpenAI-powered review with cost optimization through model selection is the goal, and the maintenance risk is acceptable.

Skip it if: Data sovereignty matters, active maintenance is required, or a self-hosted deployment is needed.

9. Kodus AI

Ideal for: Teams interested in agent-based review approaches with active self-hosted deployment options, organizations evaluating the latest paradigm in AI code review, and developers wanting a tool with a rapid release cadence.

Kodus AI is an open-source AI agent that reviews code with an agent-based architecture. With 976 stars, 89 forks, and 129 total releases, Kodus is in active development. The latest self-hosted release, 2.0.22, was published March 9, 2026, and the repository contains 3,018 commits across a TypeScript monorepo structure (apps/, libs/, packages/).

What was the testing outcome?

What I noticed during evaluation was a more mature project than expected, with rapid iteration and a structured monorepo architecture. The agent-based framing reflects the broader industry movement toward autonomous code review systems.

However, documentation on polyglot monorepo capabilities and production-scale benchmarks remains limited. The rapid release cadence (129 releases) suggests active development but also potential instability between versions.

What's the setup experience?

Kodus AI supports self-hosted deployment. Teams should budget evaluation periods and expect to reference GitHub issues for undocumented configuration scenarios.

Kodus AI pros

- Agent-based architecture: Represents the next paradigm in AI code review with active development backing it.

- Rapid release cadence: 129 releases and 3,018 commits indicate sustained engineering investment.

- Self-hosted option: Addresses data sovereignty requirements.

Kodus AI cons

- Documentation gaps: Polyglot capabilities and production-scale results need more documentation.

- Limited adoption: 976 stars is promising but still small compared to SonarQube or Tabby.

- TypeScript monorepo specifics: Language coverage details for non-TypeScript codebases need verification.

Pricing

- Software: Free, open source

- Evaluation time: Budget extended periods for experimentation

- Alternative: CodeRabbit at $12/user/month (Lite) for proven commercial functionality; Augment Code for enterprise teams that need architectural context across large, complex codebases

Verdict on Kodus AI

Choose it if: An actively developed, agent-based approach with self-hosted deployment is appealing, and evolving documentation is tolerable.

Skip it if: Immediate production reliability with comprehensive documentation for a specific language stack is required.

10. snarktank/ai-pr-review

Ideal for: Teams already using Anthropic's Claude models or Amp for development workflows, organizations that prefer Claude's code analysis capabilities, and developers seeking GitHub Actions integration with Anthropic's ecosystem.

snarktank/ai-pr-review provides GitHub Actions integration specifically designed for teams using Anthropic's Claude models.

What was the testing outcome?

The tool's real-world utility is limited by how early-stage the project still is.

It leverages Claude's code analysis capabilities, but with 57 stars, 6 forks, no formal releases, and only 2 contributors, it remains an experimental project. No verifiable discussion of it was found in the broader developer community. The minimal contributor base and the lack of formal releases pose significant sustainability risks.

What's the setup experience?

Existing access to the Amp or Claude Code APIs is required. Because the tool is API-based, infrastructure costs are limited to per-request API charges.

snarktank/ai-pr-review pros

- Claude model quality: Leverages Claude's strong code analysis capabilities.

- MIT license: Full customization and contribution rights.

- Anthropic ecosystem integration: Natural fit for teams already using Claude.

snarktank/ai-pr-review cons

- Minimal adoption: 57 stars, no releases, only 2 contributors. Not suitable for enterprise use.

- No formal releases: Version management and upgrade paths are undefined.

- API dependency: Requires Claude Code or Amp access.

Pricing

- Software: Free, MIT license

- API costs: Anthropic Claude API pricing applies

- Prerequisites: Existing Amp or Claude Code access required

Verdict on snarktank/ai-pr-review

Choose it if: The team is already in the Anthropic ecosystem, wants to experiment with Claude-powered GitHub Actions, and accepts the risks of early-stage software.

Skip it if: Production reliability, formal release management, or broader community support is required.

Decision Framework: Choosing the Right Tool

Choosing the right open source AI code review tool depends less on feature checklists and more on your specific constraints.

💡 Pro Tip: Start with your primary constraint:

| If your constraint is... | Choose... | Because... |
|---|---|---|
| Complete data sovereignty | Tabby or PR-Agent with Ollama | Zero external API calls (note PR-Agent config bugs) |
| Already on GitHub Advanced Security | CodeQL | Native integration, sophisticated analysis |
| GitLab-native team | Hexmos LiveReview | Built for GitLab, not bolted on |
| Custom security rules | Semgrep | Pattern-based flexibility |
| Proven stability over AI features | SonarQube Community Edition | 20+ years battle-tested |
| Quick experimentation | villesau/ai-codereviewer | Fastest setup, highest adoption, but stale |
| Anthropic ecosystem | snarktank/ai-pr-review | Claude model quality, experimental only |
| Agent-based review | Kodus AI | Active development, self-hosted option |

Constraint #1: Data Sovereignty

For security-sensitive teams requiring zero external API calls, evaluate Tabby or PR-Agent with Ollama. Both require at least 8GB of VRAM for local model inference, and either option typically involves a multi-week deployment timeline.

Note that PR-Agent's Ollama integration has an unresolved configuration bug (Issue #2098): the agent can ignore local endpoint settings and environment variables and default to hardcoded OpenAI models. This is a known blocker for air-gapped deployments as of early 2026 and has remained unresolved for 4+ months. Budget extra debugging time and monitor the issue for resolution.
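A simple preflight check can at least confirm that every endpoint a deployment is configured to use points at a loopback or private address. The sketch below is a hedged illustration: the function names and the `endpoints` dictionary are hypothetical, and how the effective endpoints are extracted from a given tool's configuration is outside its scope. Port 11434 is Ollama's default; the other values are made up for the example.

```python
import ipaddress
from urllib.parse import urlparse


def is_local_endpoint(base_url: str) -> bool:
    """Return True if the URL's host is localhost or a loopback/private IP."""
    host = urlparse(base_url).hostname
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Any other hostname is treated as non-local; no DNS lookup is done.
        return False
    return addr.is_loopback or addr.is_private


def non_local_endpoints(endpoints: dict[str, str]) -> list[str]:
    """Return the names of configured endpoints that are not local, sorted."""
    return sorted(
        name for name, url in endpoints.items() if not is_local_endpoint(url)
    )


if __name__ == "__main__":
    # Hypothetical configuration values for illustration.
    configured = {
        "review_model": "http://localhost:11434",
        "fallback_model": "https://api.openai.com/v1",
    }
    offenders = non_local_endpoints(configured)
    if offenders:
        print("Non-local endpoints configured:", ", ".join(offenders))
```

This only validates configured values. Since the reported problem is a tool ignoring its configuration, blocking outbound traffic at the network level remains the more reliable safeguard for air-gapped environments.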

Constraint #2: Team Size and Budget

Commercial platforms like CodeRabbit ($12/user/month Lite tier) offer lower adoption costs for smaller teams. Per DX's enterprise ROI analysis, small teams (50 to 200 developers) should expect a total investment of $100K to $500K for self-hosted AI tooling, with a 12 to 18-month payback period. The cost crossover at which self-hosting becomes competitive depends heavily on GPU hardware choices and team size. For enterprise teams where the real cost driver is architectural breakage rather than tooling spend, Augment Code’s Context Engine addresses this at scale without the burden of self-hosting.
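The cost crossover mentioned above can be approximated with simple arithmetic. In this sketch, only the $12/user/month seat price comes from the comparison above; the upfront hardware cost and monthly running cost are hypothetical inputs, and the function name `crossover_month` is illustrative. It compares tooling spend only and ignores engineering time, which often dominates in practice.

```python
import math
from typing import Optional


def crossover_month(
    upfront_cost: float,
    self_hosted_monthly: float,
    seats: int,
    price_per_seat: float,
) -> Optional[int]:
    """First month in which cumulative self-hosted spend is no higher than
    cumulative per-seat spend. Returns None if self-hosting never catches up."""
    saas_monthly = seats * price_per_seat
    monthly_saving = saas_monthly - self_hosted_monthly
    if monthly_saving <= 0:
        return None
    return math.ceil(upfront_cost / monthly_saving)


if __name__ == "__main__":
    # Hypothetical: $30,000 of GPU hardware, $400/month to run, 200 seats.
    months = crossover_month(
        upfront_cost=30_000,
        self_hosted_monthly=400,
        seats=200,
        price_per_seat=12,
    )
    print("Crossover month:", months)
```

With fewer seats or higher running costs the function returns `None`, which matches the point that smaller teams often face lower adoption costs on a commercial tier.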

Constraint #3: Cross-Service Architecture

Traditional file-level review tools miss breaking changes across service boundaries. When these tools were evaluated for microservice architectures with 47+ service dependencies, context-aware solutions outperformed file-isolated alternatives by a significant margin.
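To see why file-level review misses these breaks, consider what a reviewer would need to know: every service that consumes the one being changed, directly or transitively. The toy sketch below walks a service dependency graph in reverse to list those consumers. It illustrates the idea of cross-service context only; it is not how any tool in this article is implemented, and the service names are hypothetical.

```python
from collections import deque


def affected_services(dependencies: dict[str, set[str]], changed: str) -> set[str]:
    """Return every service that depends, directly or transitively, on `changed`.

    `dependencies` maps each service to the set of services it calls.
    """
    # Invert the graph so each provider maps to its consumers.
    consumers: dict[str, set[str]] = {}
    for service, deps in dependencies.items():
        for dep in deps:
            consumers.setdefault(dep, set()).add(service)

    # Breadth-first walk over consumers, starting from the changed service.
    seen: set[str] = set()
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for consumer in consumers.get(current, ()):
            if consumer != changed and consumer not in seen:
                seen.add(consumer)
                queue.append(consumer)
    return seen


if __name__ == "__main__":
    # Hypothetical service graph for illustration.
    graph = {
        "checkout": {"payments", "auth"},
        "payments": {"auth"},
        "notifications": {"checkout"},
        "auth": set(),
        "search": set(),
    }
    print(sorted(affected_services(graph, "auth")))
```

A diff that touches only `auth` looks self-contained at the file level, yet three other services in this example graph sit downstream of it.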

When Augment Code's Context Engine was tested on the same monorepo, it identified architectural drift across service boundaries that every open-source tool on this list missed. The Context Engine processes 400,000+ files through semantic dependency analysis, achieving 70.6% SWE-bench accuracy and 59% F-score in code review quality, precisely because it maintains architectural context rather than reviewing files in isolation.

Start with Established Quality Gates, Then Layer Context

Open source AI code review tools provide genuine value in specific contexts: data sovereignty requirements, cost-constrained experimentation, or complementing existing static analysis pipelines. The key is to match tool capabilities to actual constraints rather than to adopt based on feature lists.

Start with SonarQube Community Edition as the foundation for established quality gates. Add Tabby or PR-Agent with Ollama for self-hosted AI capabilities if data privacy requires it. Budget for appropriate evaluation periods: initial adoption excitement typically fades after several months, after which real friction becomes visible.

Context-aware AI code review represents a fundamental shift from lint-level feedback to architectural understanding. Engineering teams evaluating AI code review should prioritize comprehensive context analysis and semantic dependency mapping over feature checklists.

Augment Code's Context Engine identifies architectural violations and breaking changes across 400,000+ files through semantic analysis, achieving 70.6% SWE-bench accuracy and 59% F-score in code review quality.

For teams ready to move beyond file-level review entirely, Intent provides the orchestration layer that makes code review spec-driven. Rather than reviewing diffs in isolation, Intent’s Verifier agent validates each implementation against the living specification that defines API contracts, architectural patterns, and acceptance criteria. Combined with the Context Engine’s cross-repository awareness, this catches the class of bugs that no open source tool in this list can detect: architectural drift across service boundaries.

Test architectural context analysis on your enterprise monorepo.

Written by

Molisha Shah

GTM and Customer Champion