In my February to March 2026 hands-on testing on complex team codebases, Intent by Augment Code stood out for keeping multiple agents aligned because its living spec acts as a shared, continuously updated plan.

TL;DR

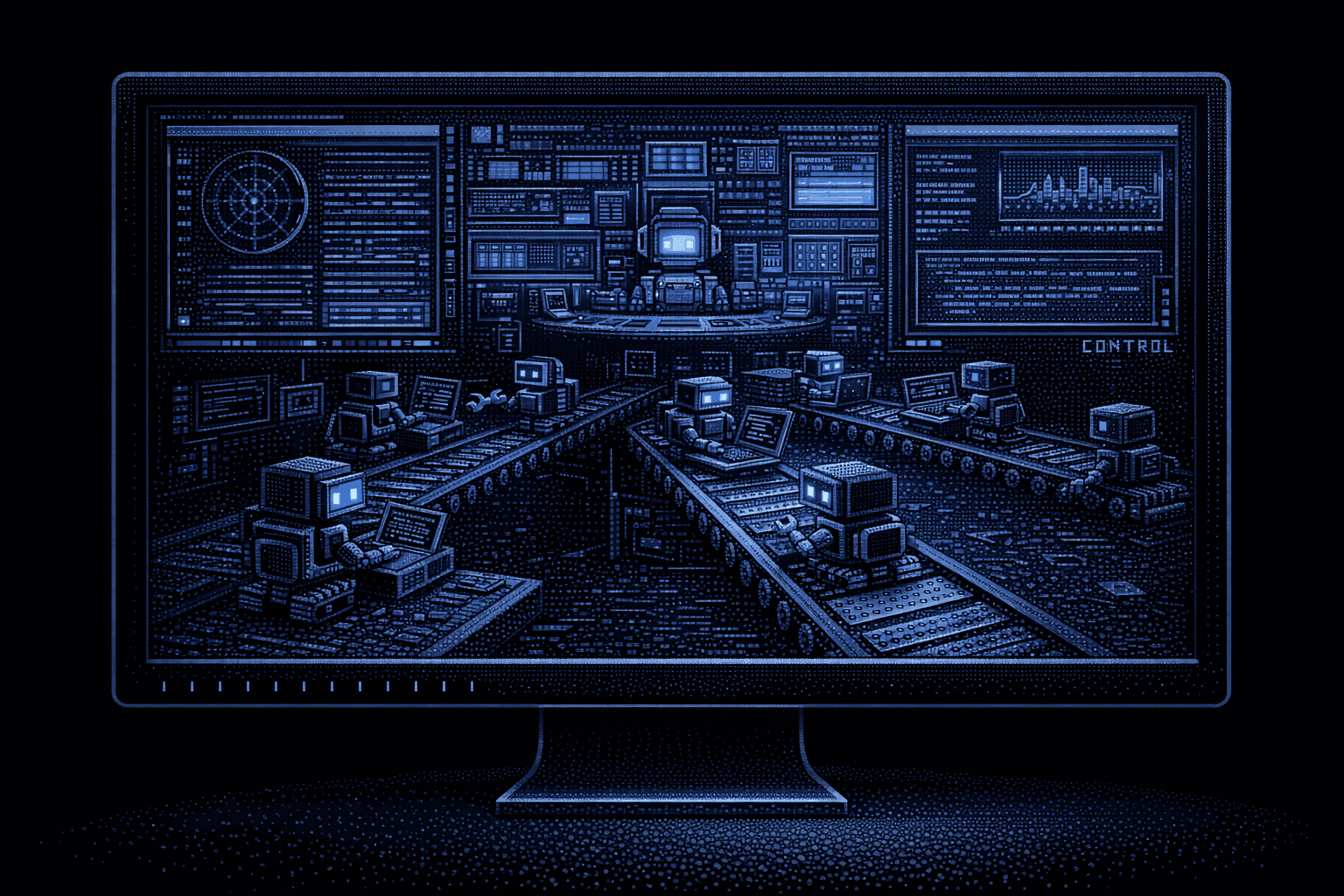

AI coding agents are moving out of IDE sidebars into desktop apps, CLIs, and cloud sandboxes, which makes long-horizon tasks and parallel agents easier to run and monitor. I tested Intent, Codex Desktop App, Sculptor, Claude Code, and Devin on production repos; Intent required the least manual reconciliation when parallel work touched shared contracts.

Testing note: The conclusions labeled "in my testing" below are subjective observations from running these tools against private, production-scale repositories during February-March 2026. They're useful for understanding workflow feel and coordination behavior, but they are not vendor-published benchmarks.

In my testing, Intent's living spec plus its codebase-wide context approach produced the most consistently coordinated multi-agent workflows among these five tools on large, coupled codebases, particularly when parallel work touched shared contracts and cross-service boundaries. The Intent blog post and product docs describe the mechanics in more detail.

See how Intent's living spec and multi-agent orchestration handle complex codebases.

Free tier available · VS Code extension · Takes 2 minutes

Why AI Coding Agents Are Moving Beyond IDE Sidebars

In 2025-2026, multiple vendors shipped developer agents with terminal-first, desktop-first, or cloud-first "mission control" surfaces, then layered in IDE integrations later. OpenAI's Codex app, Anthropic's Claude Code, and Imbue's container-first Sculptor all follow that pattern.

Here are the three moves that best illustrate the shift:

- OpenAI positions the Codex app as a command center for managing largely autonomous coding agents executing long-horizon tasks, with sandboxing enforced via local and OS-native mechanisms in the desktop app.

- Anthropic ships Claude Code as a CLI-first agent with optional IDE extensions.

- Augment Code launched Intent as a desktop workspace for multi-agent orchestration centered on a living spec.

Across these releases, I saw a move from "AI inside your IDE" toward "AI as the development platform," with one surface for spawning and monitoring agents and another for reviewing and integrating diffs. This is an interpretation of vendor positioning and product UX; for a neutral benchmark that reflects repo-level, multi-file tasks, see SWE-bench. The shift is part of the broader category of agentic development environments, which differ from traditional IDE extensions in where agents run and how they are supervised.

In my experience, the form factor matters a lot once you add parallel agents or longer task horizons. IDE extensions tend to constrain agents to single context windows and synchronous, editor-bound interactions, while desktop apps and CLI tools make it easier to run parallel work and monitor long-running jobs.

Here is how these five tools compare across the dimensions that matter most to professional engineers.

Quick Comparison: 5 AI Coding Agent Desktop Apps at a Glance

Before diving into individual reviews, this table captures the architectural differences I observed across all five tools. Platform and pricing details below are sourced from official documentation where available.

| Dimension | Intent | Codex Desktop App | Sculptor | Claude Code | Devin |
|---|---|---|---|---|---|
| Form factor | macOS desktop app | macOS + Windows desktop app | macOS + Linux desktop app | CLI + IDE extensions | Cloud web app |
| Execution model | Local desktop, multi-agent | Cloud containers (OpenAI-managed) + local OS sandbox, parallel | Docker containers, parallel | Local terminal, subagents | Remote cloud, autonomous |
| Agent orchestration | Coordinator + Specialists + Verifier with living spec | Parallel agents in isolated sandboxes | Containerized agents with IDE sync | Configurable specialized agents (including subagents) defined via settings and commands | Single autonomous agent |
| Model flexibility | BYOA + Augment agents | GPT-5.3-Codex, GPT-5.4 (Codex model family) | Anthropic account required (per docs) | Anthropic models (Claude) | Proprietary service |
| Context handling | Shared living spec + Context Engine | Per-sandbox context | Per-container context | Per-session context window | Cloud-hosted workspace context |
| Pricing | Augment credits or BYOA workspace | Included with ChatGPT plans (promotional) | No commercial pricing published (preview) | Subscription tiers | Subscription + usage-based compute |
| Best for | Multi-agent coordination on complex codebases | Long-running parallel tasks across repos | Safe concurrent agent execution | Terminal-first developers | Async task delegation |

1. Intent by Augment Code: Spec-Driven Multi-Agent Orchestration

Platform: macOS (public beta, February 2026); Windows waitlist

Pricing: Augment credits, or a BYOA workspace (Context Engine features require an Augment subscription)

Best for: Teams coordinating multiple agents on complex, multi-service codebases

Intent is a standalone macOS desktop application built for spec-driven development with multi-agent orchestration. It is a purpose-built workspace where multiple AI coding agents share a living spec and coordinate around a single evolving plan.

The Living Spec: Why Coordination Beats Parallelism

The living spec is what separates Intent from other tools in this comparison. When I ran multi-agent tasks in Claude Code or Codex, each agent typically operated with its own prompt and partial context; when one agent's output affected another's task, reconciliation became a manual "glue" step.

Intent's approach makes coordination explicit via a shared document that acts as a canonical source of truth, reducing prompt drift, stale assumptions, and conflicting parallel work in multi-agent setups. In practice, that meant:

- When an agent completes a task, the spec updates to reflect what was actually built

- When requirements change mid-run, updates propagate to agents that haven't started yet

- All agents read from and write to the same spec

This spec-driven approach is a meaningful departure from prompt-based workflows where each agent operates on its own interpretation of the task.
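
To make the three behaviors above concrete, here is a minimal sketch of a shared spec in Python. It is not Intent's implementation or data model; the `LivingSpec`, `SpecTask`, and `run_agent` names are hypothetical and exist only to show one shared plan being read and written by every agent, with requirement changes reaching only tasks that have not started.

```python
from dataclasses import dataclass, field


@dataclass
class SpecTask:
    """One unit of work tracked in the shared spec (hypothetical shape)."""
    name: str
    requirement: str
    status: str = "pending"  # "pending" until an agent completes it, then "done"
    built: str = ""          # what was actually built, recorded on completion


@dataclass
class LivingSpec:
    """A single shared plan that every agent reads from and writes to."""
    tasks: dict[str, SpecTask] = field(default_factory=dict)

    def add_task(self, name: str, requirement: str) -> None:
        self.tasks[name] = SpecTask(name, requirement)

    def change_requirement(self, name: str, requirement: str) -> bool:
        """Update a requirement; only tasks that have not started pick it up."""
        task = self.tasks[name]
        if task.status != "pending":
            return False
        task.requirement = requirement
        return True

    def complete(self, name: str, built: str) -> None:
        """Record what the agent actually built, not just what was planned."""
        task = self.tasks[name]
        task.status = "done"
        task.built = built


def run_agent(spec: LivingSpec, name: str) -> str:
    """Toy agent: reads its requirement at start time, then reports back."""
    requirement = spec.tasks[name].requirement
    built = f"implemented: {requirement}"
    spec.complete(name, built)
    return built


spec = LivingSpec()
spec.add_task("api", "expose /orders v1")
spec.add_task("client", "call /orders v1")

run_agent(spec, "api")
# Requirements change mid-run: the finished task is left alone,
# while the pending task sees the update when it starts.
api_changed = spec.change_requirement("api", "expose /orders v2")
spec.change_requirement("client", "call /orders v2")
client_result = run_agent(spec, "client")
```

In this toy run the `client` agent starts after the change and therefore builds against v2, while the spec still records that `api` shipped v1. That mismatch is exactly the kind of stale assumption a shared document makes visible.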

Three-Tier Agent Architecture

Intent implements a structured three-tier agent architecture: Coordinator, Specialists, and Verifier. Planning, execution, and validation stay aligned to the living spec through explicit orchestration, so agents converge as the plan evolves without requiring the developer to constantly restate context in each agent thread.
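
The division of labor can be sketched as a small plan, execute, validate pipeline. This is an illustrative Python sketch under my own assumptions, not Intent's API: `coordinator`, `verifier`, `orchestrate`, and `echo_specialist` are hypothetical names, and the decomposition rule (split on `;`) is a stand-in for real planning.

```python
from typing import Callable

# A specialist takes a subtask description and returns its output.
Specialist = Callable[[str], str]


def coordinator(goal: str) -> list[str]:
    """Split a goal into subtasks (hypothetical rule: one per ';' clause)."""
    return [part.strip() for part in goal.split(";") if part.strip()]


def verifier(subtask: str, output: str) -> bool:
    """Accept an output only if it mentions the subtask it claims to cover."""
    return subtask in output


def orchestrate(goal: str, specialist: Specialist) -> dict[str, bool]:
    """Plan, execute, and validate; returns a pass/fail verdict per subtask."""
    results: dict[str, bool] = {}
    for subtask in coordinator(goal):
        output = specialist(subtask)
        results[subtask] = verifier(subtask, output)
    return results


def echo_specialist(subtask: str) -> str:
    """Toy specialist that simply reports the subtask as done."""
    return f"done: {subtask}"


verdicts = orchestrate("update schema; regenerate client", echo_specialist)
```

The point of the separation is that the verifier checks outputs against the plan rather than trusting each specialist's own report.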

Beyond orchestration, Intent consolidates tools that developers typically switch between: a built-in Chrome browser for previewing local changes, full git workflow integration from staging through PR creation and merging, and resumable sessions that persist workspace state. These features mean the entire workflow from spec to merge happens in one surface.

BYOA: Use Your Existing Subscriptions

Intent supports a bring-your-own-agent (BYOA) model. In a BYOA setup, you can use external agents like Claude Code, Codex, or OpenCode inside Intent's workspace while still benefiting from the living spec and orchestration layer.

The important nuance is that BYOA users get the workspace and orchestration experience, but the Context Engine requires an Augment subscription. With the Context Engine active, agents gain semantic understanding of service boundaries, API contracts, and dependency relationships across the full codebase. In my testing, the Coordinator's task decomposition felt more precise on tightly coupled repos when the Context Engine was enabled; I attribute that to stronger awareness of which parts of the codebase were actually coupled.

Model Flexibility

Intent supports mixing models based on task requirements: Opus for complex architecture and planning, Sonnet for rapid iteration, and GPT-5.2 for deep code analysis and code review. This flexibility means you can assign the right model to each agent role rather than running everything through a single provider.

Integrations

Intent integrates with GitHub, Linear, and Sentry, and includes themes, granular agent configuration, and activity feeds for tracking agent progress across tasks.

Limitations I Observed

These are the main constraints I ran into, or could not validate from public docs, during testing:

- macOS only today; Windows is on a waitlist with no published timeline

- No published performance benchmarks (completion rates, speed comparisons versus competing tools) in the launch materials

- Enterprise features (SSO, audit logs, compliance certifications) are not publicly documented as of the public beta

- Public beta stage means limited third-party production case studies

The trade-off is clear: Intent is optimized for coordination and shared truth, but the product is still early in its public rollout.

Who Should Choose Intent

Intent was the strongest fit in my testing for engineering teams working on multi-service architectures where cross-agent coordination is the bottleneck. The living spec plus codebase-aware context tooling targets a common failure mode: parallel agents produce conflicting changes across coupled services.

If your codebase involves shared validation libraries, cross-service event contracts, or configuration inheritance patterns, Intent's coordinated approach can reduce manual reconciliation versus running independent agents in parallel. For a closer look at how Intent's coordination model compares to individual tools, see the dedicated Intent vs Codex, Intent vs Claude Code, and Intent vs Devin comparisons.

2. OpenAI Codex Desktop App: Cloud Sandbox Parallel Execution

Platform: macOS (Feb 2, 2026), Windows (Mar 4, 2026)

Pricing: Included with ChatGPT plans (promotional)

Best for: Running multiple long-horizon tasks in parallel across different repositories

The Codex app is OpenAI's interface for managing autonomous coding agents executing long-horizon tasks in isolated cloud sandboxes.

Cloud Sandbox Architecture

The Codex app supports three execution modes: local (directly in your project directory), worktree (isolated Git worktree), and cloud (OpenAI-managed containers). Cloud tasks get their own isolated sandbox, and tasks can run for more than 24 hours uninterrupted, even when your laptop is offline. Multiple agents can run in parallel across different repositories without local resource conflicts.
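
For readers unfamiliar with worktree isolation, the underlying Git mechanism is `git worktree add`, which checks out a branch into a separate directory that shares the same repository history. The Python sketch below only builds that command for one task; it is not how the Codex app invokes Git, and the directory layout and `agent/<task>` branch naming are my own assumptions.

```python
from pathlib import Path


def worktree_add_command(repo_root: str, task_id: str, base: str = "main") -> list[str]:
    """Build a `git worktree add` call for one task.

    The sibling-directory layout and branch name are hypothetical conventions.
    """
    root = Path(repo_root)
    path = root.parent / f"{root.name}-{task_id}"
    branch = f"agent/{task_id}"
    # -C runs git inside repo_root; -b creates the new branch from `base`.
    return ["git", "-C", repo_root, "worktree", "add", "-b", branch, path.as_posix(), base]


cmd = worktree_add_command("/work/shop", "fix-123")
```

Passing the returned list to `subprocess.run(cmd, check=True)` inside a real repository would create the worktree. Each task then edits its own directory and branch, so parallel tasks do not overwrite each other's working files.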

This is the right architecture for a specific use case: parallelizing substantial, well-defined work across multiple repositories.

Model Family

Codex supports the GPT-5.3-Codex model, with GPT-5.4 now the recommended default for most tasks. GPT-5.4 incorporates the coding capabilities of GPT-5.3-Codex alongside stronger reasoning and tool use. Model availability is tracked in the official Codex changelog.

Integrations

The desktop app supports GitHub workflows and MCP (Model Context Protocol), as documented in the Codex changelog.

Limitations I Observed

These are the main trade-offs I ran into while using Codex Desktop App, plus a few gaps I could not confirm in public docs:

- No BYOA model flexibility: Locked to Codex-supported models in the GPT-5 family; no bring-your-own-agent support

- Sandbox isolation is real: By design, cloud sandboxes are separated from your local machine (including local dev caches), which can change how dependency setup feels on some stacks

- No documented coordination artifact: I did not see a shared coordination artifact (like a living spec) for keeping parallel agents aligned across tasks

- Pricing uncertainty: OpenAI describes current plan inclusion as promotional and has not announced a permanent post-promotional structure

Who Should Choose Codex Desktop App

Codex Desktop App is a strong choice for developers who need to parallelize long-running tasks across multiple repositories and are comfortable staying inside OpenAI's Codex model and tooling family. The cloud sandbox model means low local resource consumption and true background execution.

3. Sculptor by Imbue: Containerized Agent Infrastructure

Platform: macOS Apple Silicon (primary), macOS Intel (experimental), Linux (AppImage); no Windows support documented

Pricing: No commercial pricing published

Best for: Safely running multiple concurrent agents in isolated Docker containers with bidirectional IDE sync

Sculptor occupies a unique niche in this comparison. Built by Imbue, Sculptor is containerized infrastructure for running and coordinating AI coding agents, rather than a coding agent itself.

Each agent runs in an isolated Docker container, preventing agents from interfering with each other or destabilizing the developer's local environment.

Pairing Mode: Bidirectional IDE Sync

Sculptor's defining feature is Pairing Mode, which bidirectionally syncs changes between the agent's container and the developer's local IDE in real time.

In my testing, that meant I could continue using VS Code while agents executed code in isolated containers, with changes propagating in both directions without manual copy and paste.

Docker Dependency and Model Support

Sculptor requires Docker Desktop to run, which is a meaningful prerequisite in enterprise environments with containerization policies.

On model support, the repository states you'll need an Anthropic account to use Sculptor, suggesting Claude as the primary supported path in current public docs.

Limitations I Observed

Here are the main constraints I ran into, based on testing and what is publicly documented:

- Docker Desktop required: Adds infrastructure complexity

- Limited model support documented: Anthropic-account requirement is explicit; broader multi-provider support is not clearly documented

- No commercial pricing clarity: Public materials describe the product but not a stable commercial plan or enterprise licensing path

- No shared coordination document: Sculptor docs focus on isolation and sync; I did not see a shared spec or coordination artifact described

The upside is operational safety; the downside is that higher-level orchestration is still on you.

Who Should Choose Sculptor

Sculptor is the right choice for developers who want the safety of containerized execution without giving up their existing IDE workflow. If the primary concern is "I want to run multiple agents in parallel without them corrupting my local environment or each other," Sculptor solves that problem cleanly.

4. Claude Code by Anthropic: CLI-First Ecosystem

Platform: Terminal and CLI (primary), VS Code extension (beta), JetBrains extension (beta), web (claude.ai)

Pricing: Subscription tiers (Pro/Max)

Best for: Terminal-first developers comfortable orchestrating agents from the command line

Claude Code is Anthropic's CLI-first agentic coding tool, with IDE extensions layered on top. It straddles the terminal and IDE ecosystem rather than providing a standalone workspace with its own orchestration UI.

For this comparison, I include it because Claude Code's agentic capabilities (subagents, hooks, background tasks) make it a direct alternative to desktop agent apps for many workflows.

Agentic Capabilities (September 2025)

Anthropic's autonomous capabilities update shipped alongside the terminal v2.0 refresh and added:

- Subagents: Delegate specialized tasks in parallel

- Hooks: Automatically trigger actions at specific points

- Background tasks: Keep long-running processes active without blocking progress

- Programmatic Tool Calling (PTC): Claude writes Python to orchestrate multiple tool calls in loops and conditionals

PTC results and benchmark improvements are reported in Anthropic's engineering write-up.
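
To illustrate the idea behind PTC, here is the kind of short script a model might write to drive several tool calls from code. This is a sketch of the pattern only: the tool functions `list_services`, `run_tests`, and `open_ticket` are hypothetical stubs with made-up data, not part of any Anthropic SDK.

```python
# Hypothetical tools; in a real setup the runtime would supply these.
def list_services() -> list[str]:
    return ["billing", "orders", "search"]


def run_tests(service: str) -> dict:
    failures = {"orders": 2}  # stubbed result for illustration
    return {"service": service, "failed": failures.get(service, 0)}


def open_ticket(service: str, failed: int) -> str:
    return f"TICKET[{service}]: {failed} failing tests"


def triage() -> list[str]:
    """Loop over services and branch on each result in code,
    so only the final summary needs to go back to the model."""
    tickets = []
    for service in list_services():
        report = run_tests(service)
        if report["failed"] > 0:
            tickets.append(open_ticket(service, report["failed"]))
    return tickets


tickets = triage()
```

The loop and the conditional run as ordinary code, so intermediate test reports never need to pass through the model's context one call at a time.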

Vendor-Reported Validation

Anthropic's Claude 4 announcement includes partner quotes and customer anecdotes about longer autonomous refactors. These are useful signals about adoption, but they are not independent benchmarks.

Limitations I Observed

Here are the constraints that mattered most in day-to-day use:

- No standalone orchestration UI: Developers managing multi-agent workflows need to build their own orchestration layer

- Anthropic model family only: Claude Code runs on Anthropic's Claude models and does not present a multi-provider BYOA story

- No persistent cross-session artifact: Each session has its own context window; I did not see a shared, persistent coordination artifact across sessions in public product materials

Who Should Choose Claude Code

Claude Code is the right choice for terminal-native developers who want maximum agentic capability without leaving their existing workflow. The subagent and hooks system provides practical multi-agent orchestration from the command line.

5. Devin by Cognition AI: Cloud-Based Autonomous Agent

Platform: Cloud web app (browser-based)

Pricing: Subscription + usage-based compute

Best for: Async task delegation for well-defined, repetitive engineering tasks

Devin is the comparison point for what a fully cloud-based autonomous agent looks and feels like versus desktop-native tools. Developers interact through a web interface and integrations, while Devin executes tasks in Cognition's remote cloud infrastructure.

The Async Delegation Model

Devin's workflow is inherently asynchronous: submit a task, wait for autonomous completion, review results as a pull request. Devin follows a structured plan, code, test, debug, submit loop.
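
The plan, code, test, debug, submit loop can be sketched generically as follows. This is my own simplified Python model of such a loop, not Cognition's implementation; `run_task`, its callables, and the retry budget are hypothetical.

```python
from typing import Callable


def run_task(
    plan: list[str],
    code: Callable[[str], str],
    test: Callable[[str], bool],
    debug: Callable[[str], str],
    max_debug_rounds: int = 3,
) -> list[str]:
    """Run each planned step through code -> test -> debug, then return the
    patches to submit. Raises if a step still fails after the retry budget."""
    submitted = []
    for step in plan:
        patch = code(step)
        rounds = 0
        while not test(patch):
            if rounds == max_debug_rounds:
                raise RuntimeError(f"could not fix step: {step}")
            patch = debug(patch)
            rounds += 1
        submitted.append(patch)
    return submitted


# Toy callables: the first draft always fails, one debug pass fixes it.
patches = run_task(
    plan=["rename field", "update callers"],
    code=lambda step: f"{step} (draft)",
    test=lambda patch: patch.endswith("(fixed)"),
    debug=lambda patch: patch.replace("(draft)", "(fixed)"),
)
```

Nothing in this loop asks a human a question mid-run, which is why the model suits well-scoped tasks and why review happens only at the submit step.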

Cognition's own 2025 self-assessment notes: "While human engineers tend to cluster around a level, Devin is senior-level at codebase understanding but junior at execution."

The Cloud Trade-Off

Devin's cloud-only model means developers typically cannot use their exact local tooling setup during execution, and the workflow is naturally review-driven rather than "edit as it runs."

Limitations I Observed

These are the downsides that showed up most clearly in practice:

- No desktop presence: Entirely cloud-dependent; cannot work offline

- Review-latency workflow: Optimized for assign, wait, review, which is a mismatch for tasks that require tight interactive steering

- Environment control trade-off: Execution is managed by Cognition's service; I did not see documentation for local runtime customization comparable to container-based workflows

- Struggles with ambiguity: Cognition notes that Devin performs worse with mid-task requirement changes

Who Should Choose Devin

Devin is the right choice for teams comfortable treating an AI agent like a junior engineer: assign well-scoped tasks, review pull requests, merge approved work. For migration and refactor work with clear specifications, Devin's autonomous execution can be productive. For developers who want real-time oversight or need to work in offline or air-gapped environments, desktop-native tools are architecturally better fits.

Why the Shift From IDE Extensions to Desktop Apps Matters

The move from IDE-embedded AI extensions to standalone AI coding agent desktop apps is driven by architectural constraints that show up once you push beyond short, single-thread tasks. Here are the four drivers that mattered most in my testing and while reading vendor architectures:

- Multi-agent orchestration needs dedicated infrastructure. Desktop and CLI-first tools expose orchestrator concepts like subagents and role separation, which are harder to express as a single IDE sidebar interaction.

- Task horizons expanded from minutes to hours (and longer). Cloud sandboxes and background tasks make it practical to run long jobs without keeping a local editor session alive.

- Agent management and code editing benefit from separate interfaces. Tools like Codex and Intent treat "run and monitor agents" as a first-class UI surface, separate from reviewing diffs and merging.

- Sandboxed execution requires desktop or cloud infrastructure. IDE plugins usually cannot provide isolated execution environments; cloud sandboxes (Codex) and containerized environments (Sculptor) let agents execute code and iterate without risking your primary dev environment.

Security is part of this shift, too. Tool-using agents increase the importance of treating repository content and external inputs as potentially untrusted, and of keeping humans in the review loop. For threat overviews that include prompt injection and tool abuse risks, see OWASP LLM and NIST AI.

Choosing the Right AI Coding Agent Desktop App for Your Team

The right AI coding agent desktop app depends on your architecture, workflow preferences, and coordination requirements. The table below maps common needs to the tool that addresses them.

| If you need... | Choose... | Because... |
|---|---|---|
| Multi-agent coordination with shared context | Intent | Living spec can reduce conflicting work across coupled services by keeping agents aligned to one plan |
| Long-running parallel tasks across repos | Codex Desktop App | Cloud sandboxes support long-horizon execution and parallelization |
| Safe containerized execution with IDE sync | Sculptor | Docker isolation with bidirectional Pairing Mode preserves your existing IDE workflow |
| Terminal-first agentic capability | Claude Code | Subagents, hooks, and PTC provide orchestration from the command line |
| Async autonomous task delegation | Devin | Autonomous plan-code-test-debug-submit cycle optimized for review-driven work |

Choose the Coordination Model Before You Standardize on an Agent Workspace

If you are standardizing an agent workspace for a team, I would start by deciding whether your main failure mode is coordination (conflicting edits across coupled services) or throughput (lots of independent tasks that can run in parallel). Then run a short pilot on one representative "blast radius" change, for example a cross-service contract update that touches code, tests, and docs.

When coordination is the bottleneck, a shared artifact like Intent's living spec can reduce rework because agents continuously converge on the same evolving truth. When throughput is the bottleneck, Codex-style isolated parallelism can be a better fit.

Install Intent and test spec-driven coordination on your own codebase.

Written by

Molisha Shah

GTM

Molisha is an early GTM and Customer Champion at Augment Code, where she focuses on helping developers understand and adopt modern AI coding practices. She writes about clean code principles, agentic development environments, and how teams are restructuring their workflows around AI agents. She holds a degree in Business and Cognitive Science from UC Berkeley.