We optimized software development for speed for twenty years. Now AI agents can prototype faster than any team ever could, and the bottleneck has flipped. The constraint used to be "can we build it." Now it's "do we know what we're building."

This changes what specs are for. They aren't canonical truths; they are hypotheses you can test, run in parallel, and throw away. And this is the first time software engineers have been able to work this way at scale.

The cost that held us back

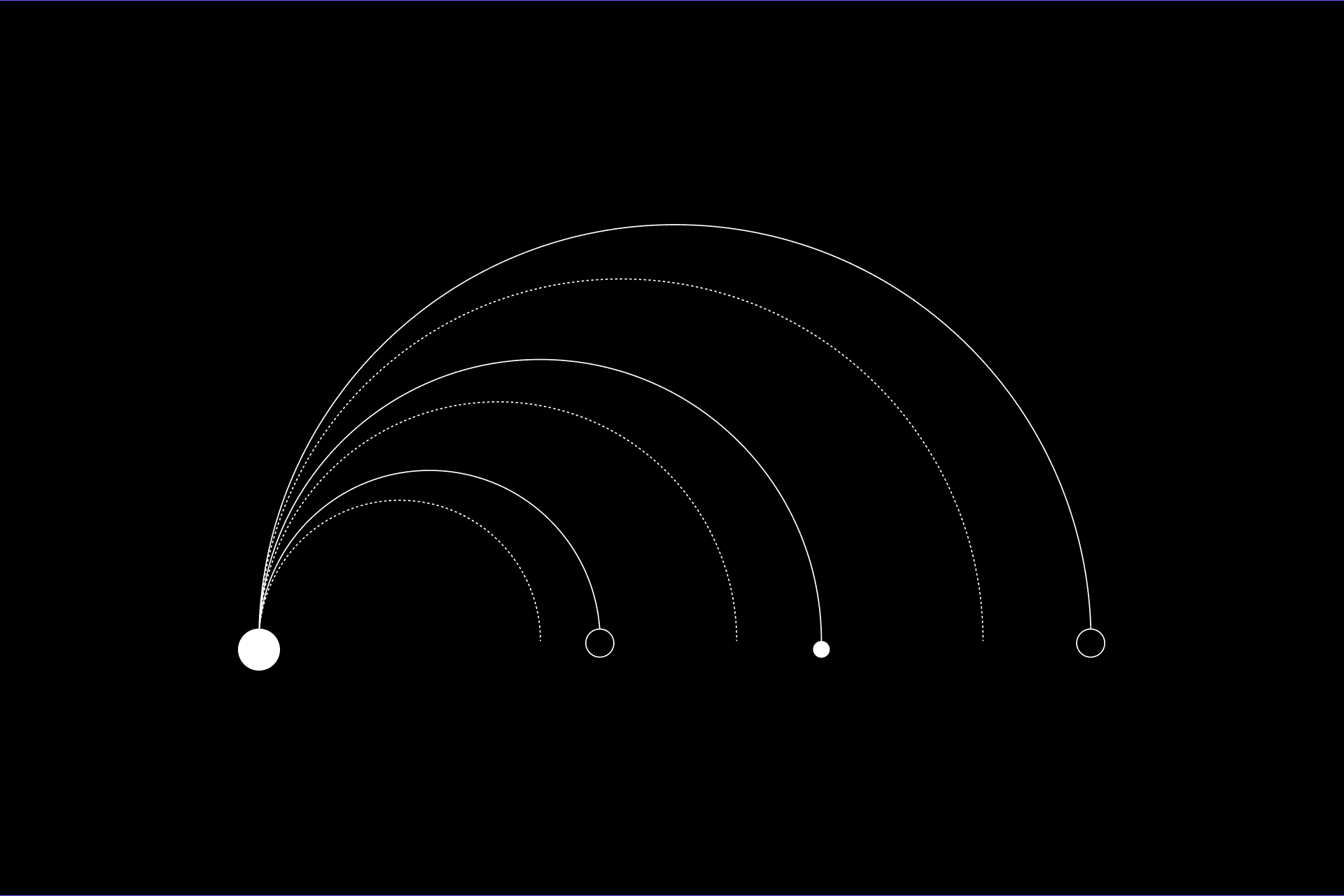

There's a framework in design thinking called the Double Diamond. Each diamond has two phases: first you diverge, exploring broadly and generating options, then you converge, narrowing to the best solution.

The Double Diamond maps creative work as two cycles of diverge-then-converge. Software teams have always skipped the first diamond because they couldn't afford it.

Designers have used this for decades. Software teams never really could, and the reason is cost. Writing one implementation takes weeks, so you can't afford to build three approaches and compare them. Instead, teams converge on a direction early: one spec, one architecture, one shot. They pick something plausible and commit before they can evaluate alternatives. This is why so many architecture decisions feel arbitrary in retrospect. The team didn't lack creativity; it just couldn't afford to explore.

A Stanford study on parallel prototyping is worth reading here. Teams who built multiple prototypes before committing produced better designs and felt more confident in their final choices than teams who refined a single prototype. That isn't surprising. Until now, it just wasn't viable in terms of time or headcount.

Agents change the cost structure at the prototype level: working code in hours rather than weeks, against your actual codebase rather than a toy example. In January, Jaana Dogan, a principal engineer at Google, posted that she'd used Claude Code to replicate a distributed agent orchestrator her team had spent a year building. It took an hour. The nuance she added two days later, after the predictable internet reaction, is the part worth paying attention to: the team had spent that year building multiple versions, each with different tradeoffs, and she fed all of that accumulated thinking to the agent. What collapsed into an hour was the execution. The year of architectural reasoning is what made it possible. When exploration is cheap, you stop guessing and start testing.

What this looks like

Same effort, radically different information surface. The old way bets everything on one spec being right. The new way runs the experiment first.

Say you're adding a permissions system to a product that's outgrown its original RBAC model. Multi-tenant customers are hacking around limitations and the security team is nervous.

The old way: weeks of architecture discussions, one RFC, arguments in Slack, and eventually someone picks ABAC because they read a blog post. You build it, discover during code review that it doesn't handle the customer hierarchy edge case, and ship anyway with a TODO.

The new way: write four specs. One extends the existing RBAC with finer-grained roles. One migrates to full ABAC. One proposes a hybrid. One explores a policy engine that externalizes all authorization logic. Agents build all four against your actual codebase and then you evaluate with real criteria: which approach handles multi-tenant hierarchy cleanly? Which introduces the fewest migration risks? Which can your team actually maintain in six months?

You made the decision with evidence. Sometimes three of the four specs turn out to be dead ends, but you learned that in two days instead of two quarters, and you learned it before committing to six months of implementation.
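One way to keep that evaluation honest is to write the criteria down before the prototypes come back, then score each one against them. The sketch below is only an illustration of that idea: the criterion names, the weights, and every score are hypothetical placeholders rather than measurements, and a real comparison would also lean on tests and code review, not a single number.

```python
from dataclasses import dataclass

# Hypothetical criteria and weights (assumed for illustration; they sum to 1.0).
# Agree on these before looking at any prototype.
WEIGHTS = {
    "tenant_hierarchy": 0.5,  # handles multi-tenant hierarchy cleanly
    "migration_risk": 0.3,    # higher score means lower migration risk
    "maintainability": 0.2,   # can the team maintain it in six months
}


@dataclass
class PrototypeResult:
    spec: str
    scores: dict[str, float]  # 0.0 (poor) to 1.0 (good), filled in after review

    def weighted_score(self, weights: dict[str, float]) -> float:
        # Weighted sum over the agreed criteria.
        return sum(weights[name] * self.scores[name] for name in weights)


def rank(results: list[PrototypeResult],
         weights: dict[str, float] = WEIGHTS) -> list[PrototypeResult]:
    # Best candidate first.
    return sorted(results, key=lambda r: r.weighted_score(weights), reverse=True)


# Placeholder scores for the four specs; invented numbers, not real results.
results = [
    PrototypeResult("extend-rbac",
                    {"tenant_hierarchy": 0.4, "migration_risk": 0.9, "maintainability": 0.7}),
    PrototypeResult("full-abac",
                    {"tenant_hierarchy": 0.8, "migration_risk": 0.3, "maintainability": 0.5}),
    PrototypeResult("hybrid",
                    {"tenant_hierarchy": 0.7, "migration_risk": 0.6, "maintainability": 0.5}),
    PrototypeResult("policy-engine",
                    {"tenant_hierarchy": 0.9, "migration_risk": 0.5, "maintainability": 0.6}),
]

if __name__ == "__main__":
    for result in rank(results):
        print(f"{result.spec}: {result.weighted_score(WEIGHTS):.2f}")
```

The point of fixing the weights up front is that the ranking then reflects what the team said it cared about, not whichever prototype looked most impressive in a demo.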

The skill that matters now

For a long time, developer productivity meant translating intent into code faster. Better editors, better languages, better frameworks, all optimized for execution speed. That's the constraint that's going away, at least at the prototype level.

The scarce resource has always been clarity: knowing what to build, defining boundaries, making implicit knowledge explicit, recognizing failure modes before they become production incidents. Execution just used to be scarce too, so we optimized for that instead and mostly ignored the clarity problem.

I've accepted agent suggestions that compiled, passed lint, and still missed the point because I hadn't been clear enough about what I actually wanted. The problem wasn't capability. The agents we have today are the worst agents we'll use for the rest of 2026, and every model improvement makes execution more reliable. But none of that helps if you don't know what to ask for.

We can find clarity with more exploration. Write ten specs. Test them all. Throw away nine. The one you keep will be better than anything you would have designed by committee over three weeks of Slack arguments, because you'll have tested it against the real alternatives, not the hypothetical ones.

Written by

Sylvain Giuliani

Sylvain Giuliani is the head of growth at Augment Code, leveraging more than a decade of experience scaling developer-focused SaaS companies from $1M to $100M+ ARR. Before Augment, he built and led the go-to-market and operations engines at Census and served as CRO at Pusher, translating deep data insights into outsized revenue gains.